1. Introduction

Hyperspectral images (HSIs) are attracting increasing attention. With the rapid iteration of hyperspectral sensors, researchers can easily collect large amounts of HSI data with high spatial resolution and many spectral bands, which form high-dimensional features that capture complex and fine geometrical structures [1,2]. These characteristics encourage the wide use of HSIs in various thematic applications, such as military object detection, precision agriculture [3], biomedical technology, and geological and terrain exploration [4,5]. As one of the basic methods underlying these applications, HSI classification plays an important role and has advanced steadily over the past few decades [6].

Many classic machine learning methods can be applied directly to HSI classification, such as naive Bayes, decision trees, K-nearest neighbor (KNN), wavelet analysis, support vector machines (SVMs), random forest (RF), regression trees, ensemble advancement, and linear regression [7,8,9]. However, these methods either treat the HSI as a stack of several hundred grayscale images and extract the corresponding features for classification, or use only spectral features, and thus produce unsatisfactory results [6].

Recently, HSI classification methods based on sparse representation have attracted the attention of researchers [10], and many well-performing sparse representation classification (SRC) methods have been developed. SRC assumes that each spectrum can be sparsely represented by spectra belonging to the same class and then obtains a good approximation of the original data through a corresponding algorithm [11,12]. As a result, a growing number of studies have applied sparse representation to HSI classification. Because the traditional joint KNN algorithm unreasonably assigns the same weight to all pixels in a region, Tu et al. proposed a weighted joint nearest neighbor sparse representation algorithm [13]. Later, a self-paced joint sparse representation (SPJSR) model was proposed, in which the least-squares loss of the classical joint sparse representation model was replaced with a weighted least-squares loss and a self-paced learning strategy was employed to automatically determine the weights of the adjacent pixels [14]. Because different scales of a region in an HSI contain complementary and correlated knowledge, Fang et al. proposed an adaptive sparse representation method using a multiscale strategy [15]. To make full use of the spatial correlation in the HSI and improve the classification accuracy, Dundar et al. proposed a multiscale superpixel and guided filter-based spatial-spectral HSI classification method [16]. However, when the sample size is small, SRC ignores the collaborative representation provided by samples of other categories, which results in an incomplete dictionary for each category and a larger residual. To alleviate this problem, the collaborative representation classification (CRC) method was presented. Compared to SRC, the $\ell_2$ norm used by CRC offers discriminability similar to that of SRC at lower computational complexity. Using the collaborative representation, Jia et al. proposed a multiscale superpixel-based classification method [17]. To further improve the accuracy of HSI classification, Yang et al. proposed a joint collaborative representation method using a multiscale strategy and a locally adaptive dictionary [18]. Considering the correlation between different classes in the HSI, Ma et al. proposed a discriminative kernel collaborative representation method with Tikhonov regularization for HSI classification [19]. More recently, low-rank representation (LRR) has been studied in the field of HSI classification. To fully exploit the local geometric structure in the data, Wang et al. proposed a novel LRR model with regularized locality and structure [20]. Meanwhile, a new self-supervised low-rank representation algorithm was proposed by Wang et al. to further improve HSI classification [21]. Moreover, Ding et al. proposed a sparse low-rank representation method that relies on key connectivity for HSI classification [22]; this study combined low-rank representation and sparse representation while retaining the connectivity of key representations within classes. To decrease the impact of spectral variation on subsequent spectral analyses, Mei et al. conducted anti-coagulation research within coherent spatial and spectral low-rank representation, which effectively suppressed the class spectral variations [23].
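To make the collaborative representation idea discussed above concrete, the following is a minimal sketch rather than the implementation of any cited work. It assumes a dictionary `D` whose columns are training spectra from all classes, an $\ell_2$-regularized least-squares coding step with a closed-form solution, and a plain class-wise reconstruction residual as the decision rule. The function name `crc_classify` and the default value of `lam` are hypothetical choices for illustration.

```python
import numpy as np

def crc_classify(D, labels, y, lam=1e-2):
    """Assign spectrum y to the class with the smallest reconstruction residual.

    D      : (bands, n_train) array, columns are training spectra of all classes
    labels : (n_train,) array, class label of each column of D
    y      : (bands,) test spectrum
    lam    : l2 regularization weight (illustrative default, an assumption)
    """
    # Closed-form solution of min_a ||y - D a||^2 + lam * ||a||^2
    gram = D.T @ D + lam * np.eye(D.shape[1])
    alpha = np.linalg.solve(gram, D.T @ y)

    classes = np.unique(labels)
    residuals = []
    for c in classes:
        mask = labels == c
        # Reconstruct y using only the atoms and coefficients of class c
        residuals.append(np.linalg.norm(y - D[:, mask] @ alpha[mask]))
    return classes[int(np.argmin(residuals))]
```

Because the coding step reduces to one linear solve, no iterative sparse solver is needed, which is consistent with the lower computational cost attributed to CRC above. Variants that normalize each residual by the norm of the class coefficients are also common and are not shown here.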

As one of the most active feature extraction techniques, deep learning has made excellent progress in computer vision and image processing applications [24,25,26,27]. Deep learning is also becoming more popular in the field of HSI classification [28]. HSI data consist of multi-dimensional spectral cubes that contain much useful information, so the intrinsic features of the image are easily extracted by deep learning techniques [29]. For instance, the popular convolutional neural network (CNN) model has produced excellent HSI classification results [30,31]. In addition, several improved HSI classification methods based on deep learning have been proposed. By combining active learning and deep neural networks, Liu et al. proposed an effective framework for HSI classification in which a deep belief network (DBN) was employed to extract the deep features hidden in the spectral dimension [32]. A learning classifier was then used to refine the training samples and boost their quality. Zhong et al. further enhanced the DBN model and proposed a diversified DBN model [33], in which the pre-training and tuning procedures of the DBN were regularized to achieve better classification accuracy. Moreover, 1D CNNs [34,35] and 1D generative adversarial networks [36,37] were also employed to describe the spectral features of the HSI. However, deep learning methods also have some problems; for example, they usually need many training samples, and the extracted features are not always interpretable. Therefore, a trend in subsequent research is to combine traditional feature extraction methods with deep learning methods to obtain more accurate classification results.

Another, more powerful classification technique for HSIs is the kernel method, especially the composite kernel (CK) method. In practice, samples in the original space are often not linearly separable [38]. To address this, the kernel method maps the samples to a higher-dimensional feature space in which they become linearly separable [39]. The performance of the kernel method depends largely on kernel selection. The Gaussian kernel and the polynomial kernel are common choices, but they are often not flexible enough to reflect the comprehensive features of the data [40]. Moreover, as accuracy requirements increase, a single kernel with a specific function cannot deliver a satisfactory result [41]. To solve this problem, the CK was proposed. It combines kernels built from two or more different features, such as a global kernel and a local kernel, or a local kernel and a spectral kernel, into a kernel composition framework for HSI classification [42]. Sun et al. proposed a CK classification method using spatial-spectral information and abundance information in the HSI [43]. For intrinsic image decomposition of the HSI, Jin et al. put forward a new optimization algorithm and then used the CK learning method to combine the reflectance and shading components [44]. Furthermore, Chen et al. proposed a spatial-spectral composite feature broad learning system classification method [45], which inherits the advantages of a broad learning system and is well suited to multi-class tasks. As a widely used classifier, the SVM can also deliver excellent HSI classification accuracy [46]. Huang et al. proposed an SVM-based method for HSI classification in which weighted mean reconstruction and CKs were combined to explore the spatial-spectral information in the HSI [47]. With the continuous development of superpixel segmentation technology, Duan et al. further improved edge-preserving features by considering the inter- and intra-spectral properties of superpixels and formed one CK for the spectral and edge-preserving features [48]. Because the HSI contains many spectral bands, mapping the high-dimensional data efficiently to improve classification speed has been of great concern in recent years. To address this problem, Tajiri et al. proposed a fast patch-free global learning kernel method based on a CK method [49]. Compared with the original single-kernel method, the CK function has two obvious advantages: (1) it maps the data into a complex nonlinear space to extract more useful information and make the data separable; (2) it provides the flexibility to include multiple and multimodal features.
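As an illustration of how a composite kernel can merge two feature types, the sketch below uses the weighted-summation form, which is one common construction and not necessarily the one adopted in the works cited above. The Gaussian base kernels, the balance parameter `mu`, the bandwidths `gamma_spec` and `gamma_spat`, and the function names are all assumptions made for this example.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """Gaussian kernel matrix between the rows of A and the rows of B."""
    sq_dist = (np.sum(A ** 2, axis=1)[:, None]
               + np.sum(B ** 2, axis=1)[None, :]
               - 2.0 * A @ B.T)
    # Clip tiny negative values caused by floating-point round-off
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))

def composite_kernel(X_spec, X_spat, mu=0.5, gamma_spec=1.0, gamma_spat=1.0):
    """Weighted-summation composite kernel (illustrative).

    X_spec : (n, d_spec) spectral features, one row per pixel
    X_spat : (n, d_spat) spatial features of the same pixels
    mu     : weight of the spatial kernel, in [0, 1] (hypothetical parameter)
    """
    K_spec = rbf_kernel(X_spec, X_spec, gamma_spec)
    K_spat = rbf_kernel(X_spat, X_spat, gamma_spat)
    # A convex combination of valid kernels is itself a valid kernel
    return mu * K_spat + (1.0 - mu) * K_spec
```

The resulting Gram matrix can be passed to any classifier that accepts a precomputed kernel, such as an SVM. In this form `mu` must be chosen by hand or by cross-validation.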

Unlike a CK, in which only one kernel function is constructed to contain both spatial and spectral information, the spatial-spectral kernel (SSK) constructs two clusters in kernel space, thus capturing the hidden manifold in the HSI [50]. For example, the spatial-spectral weighted kernel embedded manifold distribution alignment method constructs a complex kernel with different weights for the spatial kernel and the spectral kernel [51]. The spatial-spectral multiple-kernel learning method uses extended morphological profiles (EMPs) as spatial features and the original spectra as spectral features, thereby forming multiscale spatial and spectral kernels [52]. In addition, joint HSI classification methods based on spatial-spectral kernels and multi-feature fusion are especially suitable when only a limited number of training samples is available [53]. Generally, the CK and SSK methods adopt square windows or superpixel technology to extract spatial information. However, both may misclassify pixels at class boundaries. To alleviate this problem, several methods that select adaptive neighborhood pixels to construct the spatial-spectral kernel have been proposed, which further improved classification performance [54,55]. For CK and SSK methods, determining the weights of the base kernels is another difficult and pressing challenge. Therefore, many scholars have proposed multiple kernel learning methods, whose core idea is to obtain an optimal linear combination of the base kernels using an optimization algorithm [56,57,58].
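The linear combination at the core of multiple kernel learning can be written compactly as follows. The notation is introduced here for illustration only: $M$ base kernels $K_m$ and weights $\eta_m$. The non-negativity and sum-to-one constraints are a common convention and are not necessarily those used in the cited works.

```latex
K(\mathbf{x}_i, \mathbf{x}_j) = \sum_{m=1}^{M} \eta_m \, K_m(\mathbf{x}_i, \mathbf{x}_j),
\qquad \eta_m \ge 0, \qquad \sum_{m=1}^{M} \eta_m = 1
```

Under these constraints the combined kernel stays positive semidefinite whenever every base kernel is, so it can be used directly in a kernel classifier. The learning problem then reduces to choosing the weights $\eta_m$.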

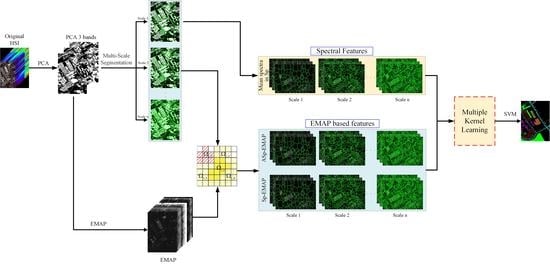

In this study, following the multiple kernel learning framework, we propose a novel multiscale adjacent superpixel-based embedded multiple kernel learning method with the extended multi-attribute profile (EMAP), termed MASEMAP-MKL, for HSI classification. The proposed method makes full use of superpixels and the EMAP to exploit multiscale and multimodal spatial and spectral features for the generation of multiple kernels. For the spatial information, both each superpixel and its first-order neighboring superpixels are used to extract geometric features at different scales, which are combined with the EMAP features to construct different base kernels. Finally, a principal component analysis (PCA)-based multiple kernel learning method is employed to determine the optimal weights of the base kernels. The main contributions of the proposed MASEMAP-MKL method are summarized as follows.

(1) Superpixel segmentation is used to extract geometric structure information in the HSI, and multiscale spatial information is simultaneously extracted by varying the number of superpixels. In addition, the spectral feature of each pixel is replaced by the average of all the spectra in its superpixel, which is used to construct a superpixel-based mean spectral kernel.

(2) The EMAP features, together with the multiscale superpixels and the adjacent superpixels obtained above, are used to construct the superpixel morphological kernel and the adjacent superpixel morphological kernel. At this stage, multiscale features and multimodal features are fused to construct three different kernels for classification.

(3) The multiple kernel learning technique is used to obtain the optimal kernel for HSI classification, which is a linear combination of all the above kernels.

(4) An experimental evaluation on two well-known datasets illustrates the computational efficiency of the proposed MASEMAP-MKL method and its quantitative superiority in terms of all classification accuracy metrics.
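The replacement of each pixel's spectrum by the mean spectrum of its superpixel, described in the first contribution, can be sketched as follows. This is an illustrative reading of that step only. It assumes that a superpixel label map `segments` is already available from some segmentation algorithm, which this section does not specify, and the function name `superpixel_mean_spectra` is hypothetical.

```python
import numpy as np

def superpixel_mean_spectra(cube, segments):
    """Replace every pixel spectrum by the mean spectrum of its superpixel.

    cube     : (H, W, B) hyperspectral cube
    segments : (H, W) integer superpixel label map (assumed to be given)
    returns  : (H, W, B) cube of superpixel-mean spectra
    """
    H, W, B = cube.shape
    flat = cube.reshape(-1, B).astype(float)
    # Map arbitrary superpixel labels to consecutive indices 0..n-1
    uniq, inv = np.unique(segments.reshape(-1), return_inverse=True)
    n = uniq.size

    sums = np.zeros((n, B))
    np.add.at(sums, inv, flat)              # accumulate spectra per superpixel
    counts = np.bincount(inv, minlength=n)  # number of pixels per superpixel
    means = sums / counts[:, None]
    return means[inv].reshape(H, W, B)
```

Applying a Gaussian kernel to the rows of the flattened output would give one possible form of a superpixel-based mean spectral kernel. Repeating the procedure with label maps that contain different numbers of superpixels would give the multiscale variants.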