Both approaches were simulated using multihop grid topologies from 16 nodes to 81 nodes, including one controller node and one sink node. We also simulated the scenario without prediction to use it as a baseline. In this case, all WSN nodes send their energy consumption to the SDN controller every 60 s. In parallel, we run a temperature monitoring application where every node sends a temperature sample (a 2-byte message) every 60 s as well. Furthermore, all nodes wait 60 s plus a random value between 0 and 60 s before initializing the prediction algorithm and the temperature application. We use the random value to prevent all nodes from transmitting energy and temperature packets at the same time, which would cause packet collisions.
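The randomized start-up described above can be illustrated with a short sketch. It is not the simulator code used in the experiments: the function names, the uniform distribution, and the fixed seed are assumptions made for illustration; only the 60 s wait, the 0 to 60 s random offset, and the 60 s period come from the text.

```python
import random

BASE_WAIT_S = 60.0    # fixed wait before start-up (from the text)
MAX_JITTER_S = 60.0   # upper bound of the random offset (from the text)
PERIOD_S = 60.0       # reporting period (from the text)


def start_time(rng):
    """Return the time (s) at which one node starts its periodic reports.

    Assumption: the random offset is drawn uniformly from [0, 60] s.
    """
    return BASE_WAIT_S + rng.uniform(0.0, MAX_JITTER_S)


def send_times(first, count):
    """Return the first `count` transmission times of a node starting at `first`."""
    return [first + k * PERIOD_S for k in range(count)]


rng = random.Random(1)  # fixed seed only so the sketch is reproducible
starts = [start_time(rng) for _ in range(16)]  # one start time per node
```

Because every node keeps the same 60 s period after its own start time, the offsets drawn at start-up keep the transmissions of different nodes spread over the period for the whole run.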

The metrics used to evaluate the energy consumption prediction proposals cover both prediction performance and network performance: prediction accuracy, total delay, total delivery rate, packet overhead, and energy consumption. The total delay is the average time packets take to reach their destination. The prediction accuracy is evaluated in two ways: prediction error and valid predictions rate. The prediction error is the average error of all the predictions made during the test. The valid predictions rate is the number of predictions with an error lower than

divided by the total predictions performed. We chose

based on the average estimation error we obtained using our energy consumption model (

Section 5.1). The total delivery rate is calculated by dividing the number of packets successfully received by the number of packets sent. The packet overhead is quantified as the number of packets per minute. The energy consumption is the average energy consumption per minute of all nodes, excluding the sink node and the controller node.
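The two prediction accuracy metrics can be written down compactly. The sketch below is illustrative only: the function names are hypothetical, and the threshold is left as a parameter because its value is not reproduced here; the sample errors and the threshold used in the example are made up.

```python
def prediction_error(errors):
    """Average prediction error over all predictions made during a test."""
    return sum(errors) / len(errors)


def valid_predictions_rate(errors, threshold):
    """Fraction of predictions whose error is strictly below `threshold`.

    `threshold` is a hypothetical parameter standing in for the error bound
    chosen in the text.
    """
    valid = sum(1 for e in errors if e < threshold)
    return valid / len(errors)


# Made-up example values, not measurements from the experiments.
sample_errors = [0.02, 0.08, 0.15, 0.04]
rate = valid_predictions_rate(sample_errors, threshold=0.10)  # 3 of 4 are valid
mean_error = prediction_error(sample_errors)
```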

The total delay, total delivery rate, and overhead metrics are classified by type of packet. Control packets are those related to the IT-SDN control plane, data packets are the application packets sent to the sink, and energy packets are the packets related to the prediction mechanisms and energy information. The graphs in this section show the average results across replications.
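As an illustration of the per-type classification, the following sketch groups a packet trace by type and computes the delivery rate, average delay, and overhead for each type. The trace format `(type, sent time, received time or None)` and the function name are assumptions made for this example, and the overhead is assumed to count sent packets; none of this describes the actual trace format of the simulator.

```python
from collections import defaultdict


def per_type_metrics(packets, duration_min):
    """Compute delivery rate, average delay, and overhead per packet type.

    packets: iterable of (ptype, sent_s, received_s), where received_s is
             None for a lost packet (hypothetical trace format).
    duration_min: length of the observed interval in minutes.
    """
    sent = defaultdict(int)
    delays = defaultdict(list)
    for ptype, sent_s, received_s in packets:
        sent[ptype] += 1
        if received_s is not None:
            delays[ptype].append(received_s - sent_s)

    result = {}
    for ptype, n_sent in sent.items():
        d = delays[ptype]
        result[ptype] = {
            "delivery_rate": len(d) / n_sent,             # received / sent
            "avg_delay_s": sum(d) / len(d) if d else None,
            "packets_per_min": n_sent / duration_min,     # overhead
        }
    return result


# Made-up trace: one control packet is lost.
trace = [
    ("control", 0.0, 0.5),
    ("control", 1.0, None),
    ("data", 2.0, 2.25),
    ("energy", 3.0, 3.5),
]
metrics = per_type_metrics(trace, duration_min=2.0)
```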

Table 3 shows the abbreviations used for each scheme, and Table 4 and Table 5 summarize the simulation and energy consumption parameters, respectively.

Table 6 lists the cost per transition in microjoules, i.e., matrix B in Equation (3). The cases with zero cost are transitions that never occurred or that we were not able to measure with our equipment. Last, according to our tests, the initial state is always Processing. Thus, the initial vector

.

Results Analysis

Figure 4a depicts the percentage of valid predictions and Figure 4b depicts the average prediction error. The Markov-PC scheme obtained the highest percentage of valid predictions and the lowest prediction error, which means it is the most effective at reducing energy packet updates. Moreover, we observed that the topology size has more impact on the Markov-PC scheme than on the Markov-Node scheme. In terms of percentage of valid predictions, the average results for the Markov-PC scheme decrease

, from 16 nodes to 81 nodes, while for the Markov-Node scheme the decrease is

.

Figure 5 depicts the average energy consumption, including the scenario without prediction. Compared with the No-Prediction scheme, the Markov-PC scheme reduces the average energy consumption in networks of 16 to 49 nodes, whereas the Markov-Node scheme increases it for all sizes. This means that the energy trade-off of the Markov-Node scheme is not positive: it spends more energy than it is able to save. The Markov-PC scheme, the one with the better prediction performance, saves approximately 1% of energy consumption in the topologies between 16 and 49 nodes but increases this metric by 1% for 64 nodes and by 5% for 81 nodes. Comparing the percentage of valid predictions in Figure 4a with the energy consumption results for the Markov-PC scheme, we can say that more than 40% of valid predictions are needed to save energy.

Figure 6a,b depicts the control and energy packet overhead, respectively. Regarding the control packet overhead, the results show similar values for all the schemes in topologies from 16 to 36 nodes. This means that implementing the prediction schemes does not increase the control overhead for small networks. Moreover, we observed that the Markov-PC scheme reduces the control overhead by around

and

for 49, 64, and 81 nodes. On the other hand, the Markov-Node scheme increases the control overhead between

and

for topologies with 64 and 81 nodes. This increase is related to the control packets delivery rate, which is analyzed further below.

The results in Figure 6b show that the Markov-Node scheme reduces the energy packet traffic while the Markov-PC scheme increases it. Even though the Markov-PC scheme has a lower prediction error and a higher percentage of valid predictions than the Markov-Node scheme, its traffic increases because the controller replies to every energy information update with the energy consumption prediction result, which is not necessary in the Markov-Node scheme. This means that using the Markov-PC scheme we need

or more valid predictions to at least equal the No-Prediction scheme. On the other hand, around

of valid predictions (Figure 4a) in the Markov-Node scheme are enough to reduce up to

of the energy packets traffic.
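The effect of the controller replies on energy packet traffic can be illustrated with a deliberately simplified model. It rests on assumptions that are not taken from the experiments: a valid prediction suppresses the node's energy update entirely, every remaining update costs one packet, a controller reply costs one more packet, and retransmissions and multihop forwarding are ignored. The function names are hypothetical, and the values the model produces are not the measured results reported in Figure 6b.

```python
def energy_packets_per_period(valid_rate, controller_replies):
    """Expected energy packets per node and reporting period (toy model).

    valid_rate: fraction of predictions that are valid, in [0, 1].
    controller_replies: True if each update triggers a reply packet
                        (as in Markov-PC), False otherwise (as in Markov-Node).
    The No-Prediction baseline corresponds to exactly 1.0 packet per period.
    """
    updates = 1.0 - valid_rate              # updates still sent by the node
    replies = updates if controller_replies else 0.0
    return updates + replies


def break_even_valid_rate(controller_replies):
    """Valid-prediction rate at which the toy model matches the baseline."""
    packets_per_update = 2.0 if controller_replies else 1.0
    return 1.0 - 1.0 / packets_per_update


with_replies = energy_packets_per_period(0.4, controller_replies=True)
without_replies = energy_packets_per_period(0.4, controller_replies=False)
```

In this toy model, a scheme whose updates are answered by the controller generates more traffic than the baseline until a large share of its predictions are valid, whereas a scheme without replies reduces traffic as soon as any prediction is valid. This mirrors the qualitative behavior described above.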

Figure 7a–c depicts the delivery rate for control, data, and energy packets, respectively. From those figures, we observed that the Markov-PC scheme equals or improves on the delivery rate of the No-Prediction scheme. For example, in the case of the energy packet delivery rate, the results for the Markov-PC scheme are between

and

over the No-Prediction scheme.

On the other hand, using the Markov-Node scheme the delivery rate is below the No-Prediction scheme results in most cases. This is interesting since the Markov-Node scheme obtained the lowest energy packets overhead, with up to less than the Markov-PC scheme. To understand this, we have to remember that in WSNs all packets share the same band and, in some cases, the same channel. Although the Markov-Node scheme obtained the lowest energy packet overhead, it also obtained the highest control packet overhead, which can affect the energy packet delivery rate. Furthermore, in the Markov-Node scheme all energy packets go upward (i.e., from sensor nodes to the controller), while in the Markov-PC scheme half of them go upward and the other half go downward (i.e., from the controller to sensor nodes). This helps to alleviate congestion on the routes and hence improves the delivery rate.

The control packets delivery rate results also helped us to understand the increase in the control overhead using the Markov-Node scheme for 64 and 81 nodes noticed before (Figure 6a). In Figure 7a, we observed that for topologies from 16 to 49 nodes, the decrease is less than

, while for 64 and 81 nodes, the decrease is around

and

, which matches the increase in the control packet overhead. These metrics are related since the overhead is caused by the retransmissions of lost packets. A lower delivery rate is a sign that more retransmissions were required.

Figure 8a–c depicts the average delay for control, data, and energy packets, respectively. The first thing we observed is that the Markov-Node scheme obtained the highest delay for all packet types, with a significant difference for the energy packets. In this case, the delay is from

to

higher than the delay in the No-Prediction scheme. Unlike the Markov-Node scheme, the Markov-PC scheme maintained the control and data packet delay of the No-Prediction scheme, and it also reduced the delay by up to

in the case of the energy packets.

The increase in the delay using the Markov-Node scheme is caused by its processing overhead. As shown in Figure 9, the Markov-Node scheme doubles the processing overhead of the Markov-PC and No-Prediction schemes, which have similar processing overheads. This processing overhead delays the forwarding of energy packets. Furthermore, as energy and data updates have the same period, it also delays the forwarding of data packets. Thus, recalling the overhead and delivery rate results, although the Markov-Node scheme reduces the number of energy packets in the network, the high delay caused by its processing overhead could be affecting the delivery rate of both energy packets and data packets.

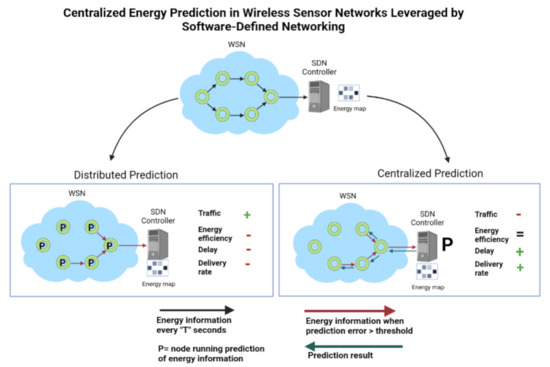

In summary, the Markov-PC scheme outperforms the Markov-Node scheme in all the metrics evaluated, except for the energy packet overhead. This means that by executing the prediction in the SDN controller we obtain more accurate predictions and a better delivery rate, as well as lower energy consumption, control packet overhead, delay, and processing overhead on the sensor nodes. With respect to the No-Prediction scheme, the Markov-PC scheme reduces the energy consumption for topologies between 16 and 49 nodes and increases it for topologies with 64 and 81 nodes. We also observed that the Markov-PC scheme increases the energy packet delivery rate and reduces their delay. Moreover, in terms of control and data packet delivery rate and delay, the Markov-PC and No-Prediction schemes obtained similar performance.

Thus, considering the whole picture, the Markov-PC scheme performed better than the Markov-Node scheme, and it also provides benefits in terms of energy efficiency and network performance compared with the No-Prediction scheme.