Shadow Enhancement Using 2D Dynamic Stochastic Resonance for Hyperspectral Image Classification

Abstract

1. Introduction

2. Materials and Methods

2.1. Dynamic Stochastic Resonance
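
As a minimal sketch, assume the discretized overdamped bistable model that DSR-based image enhancement commonly iterates: x(n+1) = x(n) + Δt[a·x(n) − b·x(n)³ + s], where the input intensity s (weak signal plus its noise) drives a particle in a double-well potential. The function name `dsr_enhance` and the values a = 2, b = 1, Δt = 0.01 and 100 iterations are illustrative assumptions, not the parameters used in this paper.

```python
import numpy as np


def dsr_enhance(signal, a=2.0, b=1.0, dt=0.01, n_iter=100):
    """Iterate a discretized bistable DSR system (illustrative parameters).

    x[n+1] = x[n] + dt * (a * x[n] - b * x[n]**3 + s), with x[0] = 0,
    where s is the input intensity acting as the driving term.
    Applied elementwise to an array of any shape (e.g. one image band).
    """
    s = np.asarray(signal, dtype=float)
    x = np.zeros_like(s)
    for _ in range(n_iter):
        x = x + dt * (a * x - b * x**3 + s)
    return x


# Hypothetical dark patch of one band, intensities in [0, 0.2]
dark = np.array([[0.00, 0.05], [0.10, 0.20]])
enhanced = dsr_enhance(dark)
```

With these settings, weak positive inputs are amplified while their ordering is preserved; DSR methods generally tune a, b and Δt against an image-quality criterion rather than fixing them in advance.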

2.2. Convolutional Neural Network

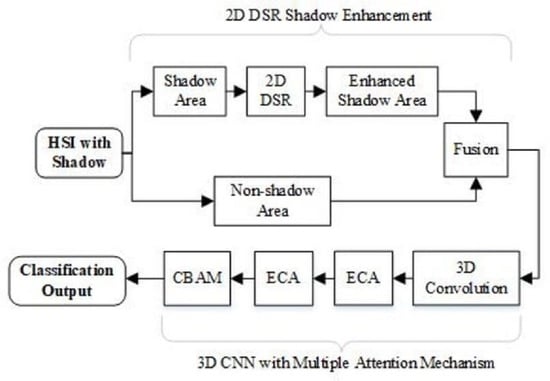

2.3. Two-Dimensional DSR Shadow Enhancement for Hyperspectral Image Classification by CNN Embedded with Multiple Attention Mechanisms

2.3.1. Two-Dimensional Dynamic Stochastic Resonance

2.3.2. Three-Dimensional Convolutional Neural Network with Multiple Attention Mechanisms
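
The MAM-3DCNN layer table lists CBAM components: 1 × 1 × 1 3DConv layers (FC3/FC5 and FC4/FC6, 8 kernels) and a single-kernel 3 × 3 × 3 3DConv with Sigmoid. The NumPy sketch below shows a standard CBAM-style block on a (C, D, H, W) feature map. It assumes a shared channel MLP without bias, as in the original CBAM design, and uses random weights. `cbam3d` and `conv3d_same` are hypothetical names, and the way the paper chains CBAM with its two ECA modules is not reproduced.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def conv3d_same(x, kernel):
    """Zero-padded 3x3x3 cross-correlation with a single output channel.

    x: (C_in, D, H, W); kernel: (C_in, 3, 3, 3). Returns (D, H, W).
    """
    _, d, h, w = x.shape
    xp = np.pad(x, ((0, 0), (1, 1), (1, 1), (1, 1)))
    out = np.zeros((d, h, w))
    for i in range(3):
        for j in range(3):
            for k in range(3):
                window = xp[:, i:i + d, j:j + h, k:k + w]
                out += np.einsum("c,cdhw->dhw", kernel[:, i, j, k], window)
    return out


def cbam3d(feat, w1, w2, spatial_kernel):
    """CBAM-style attention on a (C, D, H, W) feature map.

    w1, w2: (C, C) weights of the shared channel MLP (1x1x1 convs, no bias);
    spatial_kernel: (2, 3, 3, 3) weights of the spatial-attention 3D conv.
    """
    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)  # FC -> ReLU -> FC

    # Channel attention from global average- and max-pooled descriptors
    avg = feat.mean(axis=(1, 2, 3))
    mx = feat.max(axis=(1, 2, 3))
    feat = feat * sigmoid(mlp(avg) + mlp(mx))[:, None, None, None]

    # Spatial attention from channel-wise mean and max maps
    pooled = np.stack([feat.mean(axis=0), feat.max(axis=0)])  # (2, D, H, W)
    return feat * sigmoid(conv3d_same(pooled, spatial_kernel))[None]


rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 5, 11, 11))  # 8 channels, e.g. after the first 3DConv
out = cbam3d(feat, 0.1 * rng.standard_normal((8, 8)),
             0.1 * rng.standard_normal((8, 8)),
             0.1 * rng.standard_normal((2, 3, 3, 3)))
```

The channel step reweights feature maps and the spatial step reweights voxel positions, so the output keeps the input shape.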

2.3.3. The Procedure of the Proposed MAM-3DCNN

3. Experiment

3.1. Dataset

3.2. Parameter Setting

3.2.1. Setup of 2D DSR Parameters

3.2.2. Parameter Setting of MAM-3DCNN

3.3. Experimental Results

3.3.1. Shadow Enhancement by 2D DSR

3.3.2. Classification Results

4. Discussion

4.1. Analysis of 2D DSR Effect on Shadow Enhancement

4.2. The Classification Performance Discussion of Considered Measures

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Number | Samples | Label |
|---|---|---|
| 1 | 33,184 | Grass |
| 2 | 10,850 | Tree |
| 3 | 3376 | Road |
| 4 | 1686 | Road in shadow |
| 5 | 323 | Grass in shadow |
| 6 | 537 | Target 1 |
| 7 | 514 | Target 2 |
| 8 | 4135 | Target 3 |

| Layer | Component | Kernels/Units | Kernel Size | Activation | Dropout |
|---|---|---|---|---|---|
| 3DConv | - | 8 | 3 × 3 × 3 | ReLU | - |
| MAM: ECA1 | 1DConv | 1 | p = 3 | Sigmoid | - |
| MAM: ECA2 | 1DConv | 1 | p = 3 | Sigmoid | - |
| MAM: CBAM | FC3/FC5 (3DConv) | 8 | 1 × 1 × 1 | ReLU | - |
| MAM: CBAM | FC4/FC6 (3DConv) | 8 | 1 × 1 × 1 | - | - |
| MAM: CBAM | 3DConv | 1 | 3 × 3 × 3 | Sigmoid | - |
| FC1 | - | 256 | - | ReLU | 0.6 |
| FC2 | - | 128 | - | ReLU | 0.5 |
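
The ECA1/ECA2 rows describe a single 1D convolution with kernel size p = 3 followed by a Sigmoid. A minimal sketch of standard ECA-style channel attention under that reading follows; `eca` and `eca_kernel_size` are hypothetical names. The helper reproduces the adaptive kernel-size rule of the ECA-Net reference code (γ = 2, b = 1), which yields p = 3 for the 8 channels produced by the first 3DConv layer; whether the authors chose p this way is an assumption.

```python
import math

import numpy as np


def eca_kernel_size(channels, gamma=2, b=1):
    """Adaptive 1D kernel size rule from the ECA-Net reference code (always odd)."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 else t + 1


def eca(feat, kernel):
    """ECA-style channel attention on a (C, D, H, W) feature map.

    kernel: 1D weights of odd length p, shared by all channels (no bias).
    """
    desc = feat.mean(axis=(1, 2, 3))  # global average pooling -> (C,)
    # Cross-correlation over neighboring channels, zero-padded to length C
    logits = np.convolve(desc, kernel[::-1], mode="same")
    weights = 1.0 / (1.0 + np.exp(-logits))  # Sigmoid
    return feat * weights[:, None, None, None]


rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 5, 11, 11))
p = eca_kernel_size(feat.shape[0])  # 3 for 8 channels
out = eca(feat, rng.standard_normal(p))
```

Unlike the CBAM channel MLP, ECA avoids dimensionality reduction and only mixes each channel with its p − 1 nearest neighbors, which keeps the parameter count at p per module.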

| Name | Setting |
|---|---|
| Window size | 11 |
| Test ratio | 0.8 |
| Learning rate | 0.001 |
| Optimizer | Adam |
| Epoch | 100 |
| Loss function | Categorical cross-entropy |
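
A window size of 11 and a test ratio of 0.8 fit the usual patch-based HSI pipeline: an 11 × 11 × B neighborhood is cut around each labeled pixel, and 80% of the samples are held out for testing. The sketch below assumes reflect padding at the image border, label 0 meaning "unlabeled", and a random (non-stratified) split; `extract_patches` and `train_test_split_indices` are hypothetical names, and the toy data are random.

```python
import numpy as np


def extract_patches(cube, labels, window=11):
    """Cut a window x window x B patch centered on every labeled pixel.

    cube: (H, W, B) hyperspectral image; labels: (H, W) ints, 0 = unlabeled.
    Border pixels are handled by reflect padding (an assumption).
    """
    r = window // 2
    padded = np.pad(cube, ((r, r), (r, r), (0, 0)), mode="reflect")
    rows, cols = np.nonzero(labels)
    patches = np.stack([padded[i:i + window, j:j + window] for i, j in zip(rows, cols)])
    return patches, labels[rows, cols] - 1  # class indices start at 0


def train_test_split_indices(n_samples, test_ratio=0.8, seed=0):
    """Random (non-stratified) split; test_ratio = 0.8 keeps 20% for training."""
    idx = np.random.default_rng(seed).permutation(n_samples)
    n_test = int(round(test_ratio * n_samples))
    return idx[n_test:], idx[:n_test]  # train indices, test indices


rng = np.random.default_rng(1)
cube = rng.random((20, 20, 4))              # toy image: 20 x 20 pixels, 4 bands
labels = rng.integers(0, 3, size=(20, 20))  # toy ground truth, 0 = unlabeled
X, y = extract_patches(cube, labels)
train_idx, test_idx = train_test_split_indices(len(y))
```

Each patch is centered on its labeled pixel, so `X[:, 5, 5, :]` recovers that pixel's spectrum. A stratified split would keep small classes such as "Grass in shadow" (323 samples) represented in the training set.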

| Data | Original | Enhanced by 1D DSR | Enhanced by 2D DSR |
|---|---|---|---|
| OA (%) | 96.5388 | 97.0277 | 97.3508 |
| AA (%) | 89.8357 | 89.8990 | 91.0473 |
| Kappa (%) | 94.0936 | 94.7531 | 95.4368 |
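
OA, AA and Kappa are reported in percent. A minimal sketch of how the three scores are usually computed from a confusion matrix follows, assuming integer class labels starting at 0 and AA defined as the mean per-class accuracy; `classification_scores` is a hypothetical helper, not code from the paper.

```python
import numpy as np


def classification_scores(y_true, y_pred, n_classes):
    """Return OA, AA and Cohen's kappa (all in %) from integer label arrays."""
    cm = np.zeros((n_classes, n_classes), dtype=np.int64)
    np.add.at(cm, (y_true, y_pred), 1)  # rows: true class, columns: predicted
    total = cm.sum()
    oa = np.trace(cm) / total
    aa = np.mean(np.diag(cm) / cm.sum(axis=1))  # mean per-class accuracy
    pe = (cm.sum(axis=0) @ cm.sum(axis=1)) / total**2  # chance agreement
    kappa = (oa - pe) / (1 - pe)
    return 100 * oa, 100 * aa, 100 * kappa


oa, aa, kappa = classification_scores(np.array([0, 0, 1, 1]), np.array([0, 1, 1, 1]), 2)
```

For these toy labels the confusion matrix is [[1, 1], [0, 2]], giving OA = AA = 75% and Kappa = 50%. AA weights every class equally, so it reacts more strongly than OA to small classes such as Target 2.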

| Method | 2D CNN | GAB-2DCNN | MAM-2DCNN | 3D CNN | GAB-3DCNN | CBAM-3DCNN | SE-3DCNN | DA-3DCNN | ECA-3DCNN | DECA-3DCNN | ECA-CBAM-3DCNN | MAM-3DCNN |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Grass | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 | 0.9900 |
| Tree | 0.9725 | 0.9700 | 0.9750 | 0.9820 | 0.9840 | 0.9860 | 0.9820 | 0.9800 | 0.9860 | 0.9860 | 0.9855 | 0.9869 |
| Road | 0.9550 | 0.9600 | 0.9645 | 0.9700 | 0.9740 | 0.9720 | 0.9740 | 0.9680 | 0.9680 | 0.9690 | 0.9695 | 0.9700 |
| Road in shadow | 0.7925 | 0.8260 | 0.8320 | 0.8820 | 0.8420 | 0.8620 | 0.8560 | 0.8560 | 0.8520 | 0.8520 | 0.8430 | 0.8538 |
| Grass in shadow | 0.8975 | 0.8960 | 0.9020 | 0.9440 | 0.9220 | 0.9180 | 0.9240 | 0.9160 | 0.9280 | 0.9120 | 0.9120 | 0.9138 |
| Target 1 | 0.8425 | 0.8760 | 0.8946 | 0.8920 | 0.8760 | 0.8940 | 0.8840 | 0.8720 | 0.8840 | 0.8856 | 0.8860 | 0.9008 |
| Target 2 | 0.6625 | 0.6920 | 0.7220 | 0.7180 | 0.6260 | 0.7060 | 0.6340 | 0.6020 | 0.6420 | 0.6650 | 0.7130 | 0.7623 |
| Target 3 | 0.8775 | 0.8520 | 0.8600 | 0.9060 | 0.9320 | 0.9320 | 0.9200 | 0.9180 | 0.9260 | 0.9200 | 0.9230 | 0.9346 |
| OA (%) | 96.4257 | 96.4329 | 96.7200 | 97.3508 | 97.4475 | 97.5317 | 97.3340 | 97.2464 | 97.4746 | 97.5021 | 97.5334 | 97.6698 |
| AA (%) | 87.2958 | 88.3053 | 88.5750 | 91.0473 | 89.3490 | 90.7666 | 89.6266 | 88.7892 | 89.8707 | 89.8806 | 90.1023 | 90.9980 |
| Kappa (%) | 93.8280 | 93.8515 | 94.4820 | 95.4368 | 95.5951 | 95.7482 | 95.4046 | 95.2485 | 95.5757 | 95.5780 | 95.7650 | 95.9789 |

| Evaluation | 3D-GAN (HYDICE) | 3D-GAN (2D DSR) | MARP-GNN (HYDICE) | MARP-GNN (2D DSR) | MAM-3DCNN (HYDICE) | MAM-3DCNN (2D DSR) |
|---|---|---|---|---|---|---|
| OA (%) | 96.22 | 97.02 | 96.44 | 97.38 | 96.83 | 97.67 |
| AA (%) | 87.33 | 90.13 | 88.50 | 90.35 | 89.61 | 91.00 |
| Kappa (%) | 93.49 | 94.36 | 94.55 | 95.13 | 94.52 | 95.98 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Liu, Q.; Fu, M.; Liu, X. Shadow Enhancement Using 2D Dynamic Stochastic Resonance for Hyperspectral Image Classification. Remote Sens. 2023, 15, 1820. https://doi.org/10.3390/rs15071820


Chicago/Turabian style: Liu, Qiuyue, Min Fu, and Xuefeng Liu. 2023. "Shadow Enhancement Using 2D Dynamic Stochastic Resonance for Hyperspectral Image Classification" Remote Sensing 15, no. 7: 1820. https://doi.org/10.3390/rs15071820