Status of Phenological Research Using Sentinel-2 Data: A Review

Abstract

1. Introduction

2. Phenology of Forests

2.1. Integration of Sentinel-2 Data with Other Imagery

2.2. Vegetation Indices for Phenological Research in Woody Species

3. Phenology of Croplands

3.1. Mapping of Crops Using Time Series Data

3.2. Estimation of Crop Yield

4. Phenology of Grasslands

4.1. Monitoring Seasonal Change in Grassland to Determine Management Practices, Invasion and Biomass Production

4.2. Matching Sentinel-2 with Phenocam Data in Grasslands

5. Phenology of Other Land Classes

5.1. Mapping of Wetland Vegetation

5.2. Dealing with Mixed Pixels in Urban Areas

6. Current Developments in Using Sentinel-2 for Phenological Research

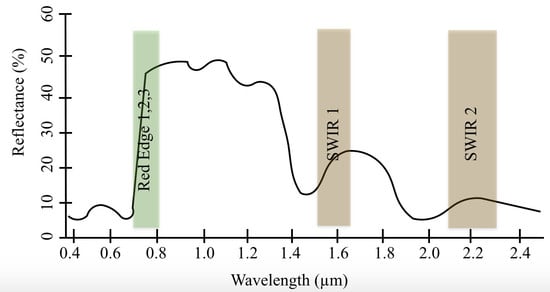

6.1. Performance of Sentinel-2 Red-Edge Bands in Phenological Research

6.2. Overcoming Cloud Cover

6.3. Gap Filling Techniques for Phenological Research Using Sentinel-2

6.4. Further Prospects

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Cleland, E.E.; Chuine, I.; Menzel, A.; Mooney, H.A.; Schwartz, M.D. Shifting plant phenology in response to global change. Trends Ecol. Evol. 2007, 22, 357–365. [Google Scholar] [CrossRef] [PubMed]

- Menzel, A.; Fabian, P. Growing season extended in Europe. Nature 1999, 397, 659. [Google Scholar] [CrossRef]

- Thackeray, S.J.; Henrys, P.A.; Hemming, D.; Bell, J.R.; Botham, M.S.; Burthe, S.; Helaouet, P.; Johns, D.G.; Jones, I.D.; Leech, D.I.; et al. Phenological sensitivity to climate across taxa and trophic levels. Nature 2016, 535, 241–245. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Laskin, D.N.; McDermid, G.J.; Nielsen, S.E.; Marshall, S.J.; Roberts, D.R.; Montaghi, A. Advances in phenology are conserved across scale in present and future climates. Nat. Clim. Chang. 2019, 9, 419–425. [Google Scholar] [CrossRef]

- Burgess, M.D.; Smith, K.W.; Evans, K.L.; Leech, D.; Pearce-Higgins, J.W.; Branston, C.J.; Briggs, K.; Clark, J.R.; Du Feu, C.R.; Lewthwaite, K.; et al. Tritrophic phenological match-mismatch in space and time. Nat. Ecol. Evol. 2018, 2, 970–975. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Visser, M.E.; Both, C. Shifts in phenology due to global climate change: The need for a yardstick. Proc. R. Soc. B Biol. Sci. 2005, 272, 2561–2569. [Google Scholar] [CrossRef] [PubMed]

- Jin, J.; Wang, Y.; Zhang, Z.; Magliulo, V.; Jiang, H.; Cheng, M. Phenology Plays an Important Role in the Regulation of Terrestrial Ecosystem Water-Use Efficiency in the Northern Hemisphere. Remote Sens. 2017, 9, 664. [Google Scholar] [CrossRef] [Green Version]

- GEO BON. What are EBVs?—GEO BON. Available online: https://geobon.org/ebvs/what-are-ebvs/ (accessed on 30 June 2020).

- Radeloff, V.C.; Dubinin, M.; Coops, N.C.; Allen, A.M.; Brooks, T.M.; Clayton, M.K.; Costa, G.C.; Graham, C.H.; Helmers, D.P.; Ives, A.R.; et al. The Dynamic Habitat Indices (DHIs) from MODIS and global biodiversity. Remote Sens. Environ. 2019, 222, 204–214. [Google Scholar] [CrossRef]

- Yu, L.; Liu, T.; Bu, K.; Yan, F.; Yang, J.; Chang, L.; Zhang, S. Monitoring the long term vegetation phenology change in Northeast China from 1982 to 2015. Sci. Rep. 2017, 7, 14770. [Google Scholar] [CrossRef] [Green Version]

- Myneni, R.B.; Keeling, C.D.; Tucker, C.J.; Asrar, G.; Nemani, R.R. Increased plant growth in the northern high latitudes from 1981 to 1991. Nature 1997, 386, 698–702. [Google Scholar] [CrossRef]

- Singh, C.P.; Panigrahy, S.; Thapliyal, A.; Kimothi, M.M.; Soni, P.; Parihar, J.S. Monitoring the alpine treeline shift in parts of the Indian Himalayas using remote sensing. Curr. Sci. 2012, 102, 558–562. [Google Scholar]

- Vitasse, Y.; Signarbieux, C.; Fu, Y.H. Global warming leads to more uniform spring phenology across elevations. Proc. Natl. Acad. Sci. USA 2017, 115, 201717342. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Fu, Y.H.; Zhao, H.; Piao, S.; Peaucelle, M.; Peng, S.; Zhou, G.; Ciais, P.; Huang, M.; Menzel, A.; Peñuelas, J.; et al. Declining global warming effects on the phenology of spring leaf unfolding. Nature 2015, 526, 104–107. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Pérez-Pastor, A.; Ruiz-Sánchez, M.C.; Domingo, R.; Torrecillas, A. Growth and phenological stages of Búlida apricot trees in south-east Spain. Agronomie 2004, 24, 93–100. [Google Scholar] [CrossRef] [Green Version]

- Denny, E.G.; Gerst, K.L.; Miller-Rushing, A.J.; Tierney, G.L.; Crimmins, T.M.; Enquist, C.A.F.; Guertin, P.; Rosemartin, A.H.; Schwartz, M.D.; Thomas, K.A.; et al. Standardized phenology monitoring methods to track plant and animal activity for science and resource management applications. Int. J. Biometeorol. 2014, 58, 591–601. [Google Scholar] [CrossRef] [Green Version]

- Beaubien, E.G.; Hamann, A. Plant phenology networks of citizen scientists: Recommendations from two decades of experience in Canada. Int. J. Biometeorol. 2011, 55, 833–841. [Google Scholar] [CrossRef] [PubMed]

- Taylor, S.D.; Meiners, J.M.; Riemer, K.; Orr, M.C.; White, E.P. Comparison of large-scale citizen science data and long-term study data for phenology modeling. Ecology 2019, 100, e02568. [Google Scholar] [CrossRef] [Green Version]

- Richardson, A.D. Tracking seasonal rhythms of plants in diverse ecosystems with digital camera imagery. New Phytol. 2019, 222, 1742–1750. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Sonnentag, O.; Hufkens, K.; Teshera-Sterne, C.; Young, A.M.; Friedl, M.; Braswell, B.H.; Milliman, T.; O’Keefe, J.; Richardson, A.D. Digital repeat photography for phenological research in forest ecosystems. Agric. For. Meteorol. 2012, 152, 159–177. [Google Scholar] [CrossRef]

- Vrieling, A.; Meroni, M.; Darvishzadeh, R.; Skidmore, A.K.; Wang, T.; Zurita-Milla, R.; Oosterbeek, K.; O’Connor, B.; Paganini, M. Vegetation phenology from Sentinel-2 and field cameras for a Dutch barrier island. Remote Sens. Environ. 2018, 215, 517–529. [Google Scholar] [CrossRef]

- Frantz, D.; Stellmes, M.; Röder, A.; Udelhoven, T.; Mader, S.; Hill, J. Improving the Spatial Resolution of Land Surface Phenology by Fusing Medium- and Coarse-Resolution Inputs. IEEE Trans. Geosci. Remote Sens. 2016, 54, 4153–4164. [Google Scholar] [CrossRef]

- Xin, Q.; Olofsson, P.; Zhu, Z.; Tan, B.; Woodcock, C.E. Toward near real-time monitoring of forest disturbance by fusion of MODIS and Landsat data. Remote Sens. Environ. 2013, 135, 234–247. [Google Scholar] [CrossRef]

- Tian, F.; Wang, Y.; Fensholt, R.; Wang, K.; Zhang, L.; Huang, Y. Mapping and evaluation of NDVI trends from synthetic time series obtained by blending landsat and MODIS data around a coalfield on the loess plateau. Remote Sens. 2013, 5, 4255–4279. [Google Scholar] [CrossRef] [Green Version]

- Walker, J.J.; de Beurs, K.M.; Wynne, R.H. Dryland vegetation phenology across an elevation gradient in Arizona, USA, investigated with fused MODIS and landsat data. Remote Sens. Environ. 2014, 144, 85–97. [Google Scholar] [CrossRef]

- Hanes, J.M.; Liang, L.; Morisette, J.T. Land Surface Phenology. In Biophysical Applications of Satellite Remote Sensing; Springer: Berlin/Heidelberg, Germany, 2014; p. 236. ISBN 978-3-642-25046-0. [Google Scholar]

- Henebry, G.M.; de Beurs, K.M. Remote Sensing of Land Surface Phenology: A Prospectus. In Phenology: An Integrative Environmental Science; Springer: Dordrecht, The Netherlands, 2013; pp. 385–411. [Google Scholar]

- Duncan, J.M.A.; Dash, J.; Atkinson, P.M. The potential of satellite-observed crop phenology to enhance yield gap assessments in smallholder landscapes. Front. Environ. Sci. 2015, 3, 56. [Google Scholar] [CrossRef] [Green Version]

- White, M.A.; de Beurs, K.M.; Didan, K.; Inouye, D.W.; Richardson, A.D.; Jensen, O.P.; O’Keefe, J.; Zhang, G.; Nemani, R.R.; van Leeuwen, W.J.D.; et al. Intercomparison, interpretation, and assessment of spring phenology in North America estimated from remote sensing for 1982–2006. Glob. Chang. Biol. 2009, 15, 2335–2359. [Google Scholar] [CrossRef]

- Misra, G.; Buras, A.; Menzel, A. Effects of Different Methods on the Comparison between Land Surface and Ground Phenology—A Methodological Case Study from South-Western Germany. Remote Sens. 2016, 8, 753. [Google Scholar] [CrossRef] [Green Version]

- Atkinson, P.M.; Jeganathan, C.; Dash, J.; Atzberger, C. Inter-comparison of four models for smoothing satellite sensor time-series data to estimate vegetation phenology. Remote Sens. Environ. 2012, 123, 400–417. [Google Scholar] [CrossRef]

- Gómez-Giráldez, P.J.; Pérez-Palazón, M.J.; Polo, M.J.; González-Dugo, M.P. Monitoring Grass Phenology and Hydrological Dynamics of an Oak–Grass Savanna Ecosystem Using Sentinel-2 and Terrestrial Photography. Remote Sens. 2020, 12, 600. [Google Scholar]

- Lin, S.; Li, J.; Liu, Q.; Li, L.; Zhao, J.; Yu, W. Evaluating the effectiveness of using vegetation indices based on red-edge reflectance from Sentinel-2 to estimate gross primary productivity. Remote Sens. 2019, 11, 1303. [Google Scholar] [CrossRef] [Green Version]

- Xue, J.; Su, B. Significant Remote Sensing Vegetation Indices: A Review of Developments and Applications. J. Sens. 2017, 2017, 1–17. [Google Scholar] [CrossRef] [Green Version]

- Tan, B.; Morisette, J.T.; Wolfe, R.E.; Gao, F.; Ederer, G.A.; Nightingale, J.; Pedelty, J.A. An Enhanced TIMESAT Algorithm for Estimating Vegetation Phenology Metrics from MODIS Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2011, 4, 361–371. [Google Scholar] [CrossRef]

- Fisher, J.I.; Mustard, J.F. Cross-scalar satellite phenology from ground, Landsat, and MODIS data. Remote Sens. Environ. 2007, 109, 261–273. [Google Scholar] [CrossRef]

- Fisher, J.I.; Mustard, J.F.; Vadeboncoeur, M.A. Green leaf phenology at Landsat resolution: Scaling from the field to the satellite. Remote Sens. Environ. 2006, 100, 265–279. [Google Scholar] [CrossRef]

- Rodriguez-Galiano, V.F.; Dash, J.; Atkinson, P.M. Characterising the land surface phenology of Europe using decadal MERIS data. Remote Sens. 2015, 7, 9390–9409. [Google Scholar] [CrossRef] [Green Version]

- Rodriguez-Galiano, V.F.; Dash, J.; Atkinson, P.M. Intercomparison of satellite sensor land surface phenology and ground phenology in Europe. Geophys. Res. Lett. 2015, 42, 2253–2260. [Google Scholar] [CrossRef] [Green Version]

- Garonna, I.; de Jong, R.; de Wit, A.J.W.; Mücher, C.A.; Schmid, B.; Schaepman, M.E. Strong contribution of autumn phenology to changes in satellite-derived growing season length estimates across Europe (1982–2011). Glob. Chang. Biol. 2014, 20, 3457–3470. [Google Scholar] [CrossRef]

- Melaas, E.K.; Sulla-Menashe, D.; Gray, J.M.; Black, T.A.; Morin, T.H.; Richardson, A.D.; Friedl, M.A. Multisite analysis of land surface phenology in North American temperate and boreal deciduous forests from Landsat. Remote Sens. Environ. 2016, 186, 452–464. [Google Scholar] [CrossRef]

- Bolton, D.K.; Gray, J.M.; Melaas, E.K.; Moon, M.; Eklundh, L.; Friedl, M.A. Continental-scale land surface phenology from harmonized Landsat 8 and Sentinel-2 imagery. Remote Sens. Environ. 2020, 240, 111685. [Google Scholar] [CrossRef]

- Zhu, Z.; Wulder, M.A.; Roy, D.P.; Woodcock, C.E.; Hansen, M.C.; Radeloff, V.C.; Healey, S.P.; Schaaf, C.; Hostert, P.; Strobl, P.; et al. Benefits of the free and open Landsat data policy. Remote Sens. Environ. 2019, 224, 382–385. [Google Scholar] [CrossRef]

- Misra, G.; Buras, A.; Heurich, M.; Asam, S.; Menzel, A. LiDAR derived topography and forest stand characteristics largely explain the spatial variability observed in MODIS land surface phenology. Remote Sens. Environ. 2018, 218, 231–244. [Google Scholar] [CrossRef]

- Helman, D. Land surface phenology: What do we really ‘see’ from space? Sci. Total Environ. 2018, 618, 665–673. [Google Scholar] [CrossRef] [PubMed]

- Chen, X.; Wang, D.; Chen, J.; Wang, C.; Shen, M. The mixed pixel effect in land surface phenology: A simulation study. Remote Sens. Environ. 2018, 211, 338–344. [Google Scholar] [CrossRef]

- Tian, J.; Zhu, X.; Wu, J.; Shen, M.; Chen, J. Coarse-Resolution Satellite Images Overestimate Urbanization Effects on Vegetation Spring Phenology. Remote Sens. 2020, 12, 117. [Google Scholar] [CrossRef] [Green Version]

- Granero-Belinchon, C.; Adeline, K.; Lemonsu, A.; Briottet, X. Phenological Dynamics Characterization of Alignment Trees with Sentinel-2 Imagery: A Vegetation Indices Time Series Reconstruction Methodology Adapted to Urban Areas. Remote Sens. 2020, 12, 639. [Google Scholar] [CrossRef] [Green Version]

- Landsat 9 «Landsat Science». Available online: https://landsat.gsfc.nasa.gov/landsat-9/ (accessed on 4 August 2020).

- User Guides—Sentinel-2 MSI—Sentinel Online. Available online: https://sentinel.esa.int/web/sentinel/user-guides/sentinel-2-msi;jsessionid=1ADA9B2E6C21CAC7C8A41E91F51B041C.jvm2 (accessed on 9 August 2020).

- Immitzer, M.; Vuolo, F.; Atzberger, C. First experience with Sentinel-2 data for crop and tree species classifications in central Europe. Remote Sens. 2016, 8, 166. [Google Scholar] [CrossRef]

- Addabbo, P.; Focareta, M.; Marcuccio, S.; Votto, C.; Ullo, S.L. Contribution of Sentinel-2 data for applications in vegetation monitoring. Acta IMEKO 2016, 5, 44. [Google Scholar] [CrossRef]

- Griffiths, P.; Nendel, C.; Hostert, P. Intra-annual reflectance composites from Sentinel-2 and Landsat for national-scale crop and land cover mapping. Remote Sens. Environ. 2019, 220, 135–151. [Google Scholar] [CrossRef]

- Frampton, W.J.; Dash, J.; Watmough, G.; Milton, E.J. Evaluating the capabilities of Sentinel-2 for quantitative estimation of biophysical variables in vegetation. ISPRS J. Photogramm. Remote Sens. 2013, 82, 83–92. [Google Scholar] [CrossRef] [Green Version]

- Hill, M.J. Vegetation index suites as indicators of vegetation state in grassland and savanna: An analysis with simulated SENTINEL 2 data for a North American transect. Remote Sens. Environ. 2013, 137, 94–111. [Google Scholar] [CrossRef]

- Veloso, A.; Mermoz, S.; Bouvet, A.; Le Toan, T.; Planells, M.; Dejoux, J.F.; Ceschia, E. Understanding the temporal behavior of crops using Sentinel-1 and Sentinel-2-like data for agricultural applications. Remote Sens. Environ. 2017, 199, 415–426. [Google Scholar] [CrossRef]

- Lessio, A.; Fissore, V.; Borgogno-Mondino, E. Preliminary Tests and Results Concerning Integration of Sentinel-2 and Landsat-8 OLI for Crop Monitoring. J. Imaging 2017, 3, 49. [Google Scholar] [CrossRef] [Green Version]

- Delegido, J.; Verrelst, J.; Alonso, L.; Moreno, J. Evaluation of sentinel-2 red-edge bands for empirical estimation of green LAI and chlorophyll content. Sensors 2011, 11, 7063–7081. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Astola, H.; Häme, T.; Sirro, L.; Molinier, M.; Kilpi, J. Comparison of Sentinel-2 and Landsat 8 imagery for forest variable prediction in boreal region. Remote Sens. Environ. 2019, 223, 257–273. [Google Scholar] [CrossRef]

- Lange, M.; Dechant, B.; Rebmann, C.; Vohland, M.; Cuntz, M.; Doktor, D. Validating MODIS and Sentinel-2 NDVI Products at a Temperate Deciduous Forest Site Using Two Independent Ground-Based Sensors. Sensors 2017, 17, 1855. [Google Scholar] [CrossRef] [Green Version]

- Zhang, X.; Wang, J.; Henebry, G.M.; Gao, F. Development and evaluation of a new algorithm for detecting 30 m land surface phenology from VIIRS and HLS time series. ISPRS J. Photogramm. Remote Sens. 2020, 161, 37–51. [Google Scholar] [CrossRef]

- Pastick, N.J.; Wylie, B.K.; Wu, Z. Spatiotemporal analysis of Landsat-8 and Sentinel-2 data to support monitoring of dryland ecosystems. Remote Sens. 2018, 10, 791. [Google Scholar] [CrossRef] [Green Version]

- Grabska, E.; Hostert, P.; Pflugmacher, D.; Ostapowicz, K. Forest stand species mapping using the sentinel-2 time series. Remote Sens. 2019, 11, 1197. [Google Scholar] [CrossRef] [Green Version]

- Hościło, A.; Lewandowska, A. Mapping Forest Type and Tree Species on a Regional Scale Using Multi-Temporal Sentinel-2 Data. Remote Sens. 2019, 11, 929. [Google Scholar] [CrossRef] [Green Version]

- Jönsson, P.; Cai, Z.; Melaas, E.; Friedl, M.; Eklundh, L. A Method for Robust Estimation of Vegetation Seasonality from Landsat and Sentinel-2 Time Series Data. Remote Sens. 2018, 10, 635. [Google Scholar] [CrossRef] [Green Version]

- Puletti, N.; Chianucci, F.; Castaldi, C. Use of Sentinel-2 for forest classification in Mediterranean environments. Ann. Silvic. Res. 2018, 42, 32–38. [Google Scholar]

- Marzialetti, F.; Giulio, S.; Malavasi, M.; Sperandii, M.G.; Acosta, A.T.R.; Carranza, M.L. Capturing Coastal Dune Natural Vegetation Types Using a Phenology-Based Mapping Approach: The Potential of Sentinel-2. Remote Sens. 2019, 11, 1506. [Google Scholar] [CrossRef] [Green Version]

- Zhang, H.K.; Roy, D.P.; Yan, L.; Li, Z.; Huang, H.; Vermote, E.; Skakun, S.; Roger, J.-C. Characterization of Sentinel-2A and Landsat-8 top of atmosphere, surface, and nadir BRDF adjusted reflectance and NDVI differences. Remote Sens. Environ. 2018, 215, 482–494. [Google Scholar] [CrossRef]

- Franch, B.; Vermote, E.; Skakun, S.; Roger, J.-C.; Masek, J.; Ju, J.; Villaescusa-Nadal, J.; Santamaria-Artigas, A. A Method for Landsat and Sentinel 2 (HLS) BRDF Normalization. Remote Sens. 2019, 11, 632. [Google Scholar] [CrossRef] [Green Version]

- Claverie, M.; Ju, J.; Masek, J.G.; Dungan, J.L.; Vermote, E.F.; Roger, J.C.; Skakun, S.V.; Justice, C. The Harmonized Landsat and Sentinel-2 surface reflectance data set. Remote Sens. Environ. 2018, 219, 145–161. [Google Scholar] [CrossRef]

- Roy, D.P.; Ju, J.; Lewis, P.; Schaaf, C.; Gao, F.; Hansen, M.; Lindquist, E. Multi-temporal MODIS-Landsat data fusion for relative radiometric normalization, gap filling, and prediction of Landsat data. Remote Sens. Environ. 2008, 112, 3112–3130. [Google Scholar] [CrossRef]

- Shuai, Y.; Masek, J.G.; Gao, F.; Schaaf, C.B. An algorithm for the retrieval of 30-m snow-free albedo from Landsat surface reflectance and MODIS BRDF. Remote Sens. Environ. 2011, 115, 2204–2216. [Google Scholar] [CrossRef]

- Chen, B.; Jin, Y.; Brown, P. An enhanced bloom index for quantifying floral phenology using multi-scale remote sensing observations. ISPRS J. Photogramm. Remote Sens. 2019, 156, 108–120. [Google Scholar] [CrossRef]

- d’Andrimont, R.; Taymans, M.; Lemoine, G.; Ceglar, A.; Yordanov, M.; van der Velde, M. Detecting flowering phenology in oil seed rape parcels with Sentinel-1 and -2 time series. Remote Sens. Environ. 2020, 239, 111660. [Google Scholar] [CrossRef]

- Ji, C.; Li, X.; Wei, H.; Li, S. Comparison of Different Multispectral Sensors for Photosynthetic and Non-Photosynthetic Vegetation-Fraction Retrieval. Remote Sens. 2020, 12, 115. [Google Scholar] [CrossRef] [Green Version]

- Guerschman, J.P.; Scarth, P.F.; McVicar, T.R.; Renzullo, L.J.; Malthus, T.J.; Stewart, J.B.; Rickards, J.E.; Trevithick, R. Assessing the effects of site heterogeneity and soil properties when unmixing photosynthetic vegetation, non-photosynthetic vegetation and bare soil fractions from Landsat and MODIS data. Remote Sens. Environ. 2015, 161, 12–26. [Google Scholar] [CrossRef]

- Li, X.; Zheng, G.; Wang, J.; Ji, C.; Sun, B.; Gao, Z. Comparison of methods for estimating fractional cover of photosynthetic and non-photosynthetic vegetation in the otindag sandy land using GF-1 wide-field view data. Remote Sens. 2016, 8, 800. [Google Scholar] [CrossRef] [Green Version]

- Roberts, D.A.; Smith, M.O.; Adams, J.B. Green vegetation, nonphotosynthetic vegetation, and soils in AVIRIS data. Remote Sens. Environ. 1993, 44, 255–269. [Google Scholar] [CrossRef]

- Hallik, L.; Kazantsev, T.; Kuusk, A.; Galmés, J.; Tomás, M.; Niinemets, Ü. Generality of relationships between leaf pigment contents and spectral vegetation indices in Mallorca (Spain). Reg. Environ. Chang. 2017, 17, 2097–2109. [Google Scholar] [CrossRef]

- Gamon, J.A.; Huemmrich, K.F.; Wong, C.Y.S.; Ensminger, I.; Garrity, S.; Hollinger, D.Y.; Noormets, A.; Peñuelas, J. A remotely sensed pigment index reveals photosynthetic phenology in evergreen conifers. Proc. Natl. Acad. Sci. USA 2016, 113, 13087–13092. [Google Scholar] [CrossRef] [Green Version]

- Flynn, K.C.; Frazier, A.E.; Admas, S. Performance of chlorophyll prediction indices for Eragrostis tef at Sentinel-2 MSI and Landsat-8 OLI spectral resolutions. Precis. Agric. 2020, 21, 1057–1071. [Google Scholar] [CrossRef]

- Landmann, T.; Piiroinen, R.; Makori, D.M.; Abdel-Rahman, E.M.; Makau, S.; Pellikka, P.; Raina, S.K. Application of hyperspectral remote sensing for flower mapping in African savannas. Remote Sens. Environ. 2015, 166, 50–60. [Google Scholar] [CrossRef]

- Campbell, T.; Fearns, P. Simple remote sensing detection of Corymbia calophylla flowers using common 3 –band imaging sensors. Remote Sens. Appl. Soc. Environ. 2018, 11, 51–63. [Google Scholar] [CrossRef]

- Stuckens, J.; Dzikiti, S.; Verstraeten, W.W.; Verreynne, S.; Swennen, R.; Coppin, P. Physiological interpretation of a hyperspectral time series in a citrus orchard. Agric. For. Meteorol. 2011, 151, 1002–1015. [Google Scholar] [CrossRef]

- Vanbrabant, Y.; Delalieux, S.; Tits, L.; Pauly, K.; Vandermaesen, J.; Somers, B. Pear flower cluster quantification using RGB drone imagery. Agronomy 2020, 10, 407. [Google Scholar] [CrossRef] [Green Version]

- López-Granados, F.; Torres-Sánchez, J.; Jiménez-Brenes, F.M.; Arquero, O.; Lovera, M.; De Castro, A.I. An efficient RGB-UAV-based platform for field almond tree phenotyping: 3-D architecture and flowering traits. Plant Methods 2019, 15, 160. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Mutowo, G.; Mutanga, O.; Masocha, M. Remote estimation of nitrogen is more accurate at the start of the growing season when compared with end of the growing season in miombo woodlands. Remote Sens. Appl. Soc. Environ. 2020, 17, 100285. [Google Scholar] [CrossRef]

- Yang, H.; Yang, X.; Heskel, M.; Sun, S.; Tang, J. Seasonal variations of leaf and canopy properties tracked by ground-based NDVI imagery in a temperate forest. Sci. Rep. 2017, 7, 1267. [Google Scholar] [CrossRef] [PubMed]

- Cerasoli, S.; Campagnolo, M.; Faria, J.; Nogueira, C.; Caldeira, M.d.C. On estimating the gross primary productivity of Mediterranean grasslands under different fertilization regimes using vegetation indices and hyperspectral reflectance. Biogeosciences 2018, 15, 5455–5471. [Google Scholar] [CrossRef] [Green Version]

- Prey, L.; Schmidhalter, U. Simulation of satellite reflectance data using high-frequency ground based hyperspectral canopy measurements for in-season estimation of grain yield and grain nitrogen status in winter wheat. ISPRS J. Photogramm. Remote Sens. 2019, 149, 176–187. [Google Scholar] [CrossRef]

- Palchowdhuri, Y.; Valcarce-Diñeiro, R.; King, P.; Sanabria-Soto, M. Classification of multi-temporal spectral indices for crop type mapping: A case study in Coalville, UK. J. Agric. Sci. 2018, 156, 24–36. [Google Scholar] [CrossRef]

- Tian, H.; Huang, N.; Niu, Z.; Qin, Y.; Pei, J.; Wang, J. Mapping Winter Crops in China with Multi-Source Satellite Imagery and Phenology-Based Algorithm. Remote Sens. 2019, 11, 820. [Google Scholar] [CrossRef] [Green Version]

- Mohammed, I.; Marshall, M.; de Bie, K.; Estes, L.; Nelson, A. A blended census and multiscale remote sensing approach to probabilistic cropland mapping in complex landscapes. ISPRS J. Photogramm. Remote Sens. 2020, 161, 233–245. [Google Scholar] [CrossRef]

- Son, N.-T.; Chen, C.-F.; Chen, C.-R.; Guo, H.-Y. Classification of multitemporal Sentinel-2 data for field-level monitoring of rice cropping practices in Taiwan. Adv. Space Res. 2020, 65, 1910–1921. [Google Scholar] [CrossRef]

- Liu, L.; Xiao, X.; Qin, Y.; Wang, J.; Xu, X.; Hu, Y.; Qiao, Z. Mapping cropping intensity in China using time series Landsat and Sentinel-2 images and Google Earth Engine. Remote Sens. Environ. 2020, 239, 111624. [Google Scholar] [CrossRef]

- Pringle, M.J.; Schmidt, M.; Tindall, D.R. Multi-decade, multi-sensor time-series modelling—based on geostatistical concepts—to predict broad groups of crops. Remote Sens. Environ. 2018, 216, 183–200. [Google Scholar] [CrossRef]

- Kussul, N.; Mykola, L.; Shelestov, A.; Skakun, S. Crop inventory at regional scale in Ukraine: Developing in season and end of season crop maps with multi-temporal optical and SAR satellite imagery. Eur. J. Remote Sens. 2018, 51, 627–636. [Google Scholar] [CrossRef] [Green Version]

- Sun, C.; Bian, Y.; Zhou, T.; Pan, J. Using of Multi-Source and Multi-Temporal Remote Sensing Data Improves Crop-Type Mapping in the Subtropical Agriculture Region. Sensors 2019, 19, 2401. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Sitokonstantinou, V.; Papoutsis, I.; Kontoes, C.; Arnal, A.; Andrés, A.P.; Zurbano, J.A. Scalable Parcel-Based Crop Identification Scheme Using Sentinel-2 Data Time-Series for the Monitoring of the Common Agricultural Policy. Remote Sens. 2018, 10, 911. [Google Scholar] [CrossRef] [Green Version]

- Wang, M.; Liu, Z.; Ali Baig, M.H.; Wang, Y.; Li, Y.; Chen, Y. Mapping sugarcane in complex landscapes by integrating multi-temporal Sentinel-2 images and machine learning algorithms. Land Use Policy 2019, 88, 104190. [Google Scholar] [CrossRef]

- Ottosen, T.-B.; Lommen, S.T.E.; Skjøth, C.A. Remote sensing of cropping practice in Northern Italy using time-series from Sentinel-2. Comput. Electron. Agric. 2019, 157, 232–238. [Google Scholar] [CrossRef] [Green Version]

- Nguyen, L.H.; Henebry, G.M. Characterizing Land Use/Land Cover Using Multi-Sensor Time Series from the Perspective of Land Surface Phenology. Remote Sens. 2019, 11, 1677. [Google Scholar] [CrossRef] [Green Version]

- Jiang, Z.; Huete, A.; Didan, K.; Miura, T. Development of a two-band enhanced vegetation index without a blue band. Remote Sens. Environ. 2008, 112, 3833–3845. [Google Scholar] [CrossRef]

- Moeini Rad, A.; Ashourloo, D.; Salehi Shahrabi, H.; Nematollahi, H. Developing an Automatic Phenology-Based Algorithm for Rice Detection Using Sentinel-2 Time-Series Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2019, 12, 1471–1481. [Google Scholar] [CrossRef]

- Ayu Purnamasari, R.; Noguchi, R.; Ahamed, T. Land suitability assessments for yield prediction of cassava using geospatial fuzzy expert systems and remote sensing. Comput. Electron. Agric. 2019, 166, 105018. [Google Scholar] [CrossRef]

- Nasrallah, A.; Baghdadi, N.; Mhawej, M.; Faour, G.; Darwish, T.; Belhouchette, H.; Darwich, S. A Novel Approach for Mapping Wheat Areas Using High Resolution Sentinel-2 Images. Sensors 2018, 18, 2089. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Huang, J.; Wang, X.; Li, X.; Tian, H.; Pan, Z. Remotely Sensed Rice Yield Prediction Using Multi-Temporal NDVI Data Derived from NOAA’s-AVHRR. PLoS ONE 2013, 8, e70816. [Google Scholar] [CrossRef] [PubMed]

- Wall, L.; Larocque, D.; Léger, P.M. The early explanatory power of NDVI in crop yield modelling. Int. J. Remote Sens. 2008, 29, 2211–2225. [Google Scholar] [CrossRef]

- Watson, C.J.; Restrepo-Coupe, N.; Huete, A.R. Multi-scale phenology of temperate grasslands: Improving monitoring and management with near-surface phenocams. Front. Environ. Sci. 2019, 7, 14. [Google Scholar] [CrossRef]

- Wang, J.; Xiao, X.; Bajgain, R.; Starks, P.; Steiner, J.; Doughty, R.B.; Chang, Q. Estimating leaf area index and aboveground biomass of grazing pastures using Sentinel-1, Sentinel-2 and Landsat images. ISPRS J. Photogramm. Remote Sens. 2019, 154, 189–201. [Google Scholar] [CrossRef] [Green Version]

- Griffiths, P.; Nendel, C.; Pickert, J.; Hostert, P. Towards national-scale characterization of grassland use intensity from integrated Sentinel-2 and Landsat time series. Remote Sens. Environ. 2020, 238, 111124. [Google Scholar] [CrossRef]

- Pastick, N.J.; Dahal, D.; Wylie, B.K.; Parajuli, S.; Boyte, S.P.; Wu, Z. Characterizing Land Surface Phenology and Exotic Annual Grasses in Dryland Ecosystems Using Landsat and Sentinel-2 Data in Harmony. Remote Sens. 2020, 12, 725. [Google Scholar] [CrossRef] [Green Version]

- Bradley, B.A. Remote detection of invasive plants: A review of spectral, textural and phenological approaches. Biol. Invasions 2014, 16, 1411–1425. [Google Scholar] [CrossRef]

- Richardson, A.D. PhenoCam-About. Available online: https://phenocam.sr.unh.edu/webcam/about/ (accessed on 9 August 2020).

- Hufkens, K.; Keenan, T.F.; Flanagan, L.B.; Scott, R.L.; Bernacchi, C.J.; Joo, E.; Brunsell, N.A.; Verfaillie, J.; Richardson, A.D. Productivity of North American grasslands is increased under future climate scenarios despite rising aridity. Nat. Clim. Chang. 2016, 6, 710–714. [Google Scholar] [CrossRef] [Green Version]

- Richardson, A.D.; Hufkens, K.; Li, X.; Ault, T.R. Testing Hopkins’ Bioclimatic Law with PhenoCam data. Appl. Plant Sci. 2019, 7, e01228. [Google Scholar] [CrossRef] [Green Version]

- Richardson, A.D.; Hufkens, K.; Milliman, T.; Frolking, S. Intercomparison of phenological transition dates derived from the PhenoCam Dataset V1.0 and MODIS satellite remote sensing. Sci. Rep. 2018, 8, 5679. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Filippa, G.; Cremonese, E.; Migliavacca, M.; Galvagno, M.; Sonnentag, O.; Humphreys, E.; Hufkens, K.; Ryu, Y.; Verfaillie, J.; Morra di Cella, U.; et al. NDVI derived from near-infrared-enabled digital cameras: Applicability across different plant functional types. Agric. For. Meteorol. 2018, 249, 275–285. [Google Scholar] [CrossRef]

- Browning, D.M.; Karl, J.W.; Morin, D.; Richardson, A.D.; Tweedie, C.E. Phenocams bridge the gap between field and satellite observations in an arid grassland. Remote Sens. 2017, 9, 1071. [Google Scholar] [CrossRef] [Green Version]

- Zhou, Q.; Rover, J.; Brown, J.; Worstell, B.; Howard, D.; Wu, Z.; Gallant, A.; Rundquist, B.; Burke, M. Monitoring Landscape Dynamics in Central U.S. Grasslands with Harmonized Landsat-8 and Sentinel-2 Time Series Data. Remote Sens. 2019, 11, 328. [Google Scholar] [CrossRef] [Green Version]

- Stendardi, L.; Karlsen, S.R.; Niedrist, G.; Gerdol, R.; Zebisch, M.; Rossi, M.; Notarnicola, C. Exploiting time series of Sentinel-1 and Sentinel-2 imagery to detect meadow phenology in mountain regions. Remote Sens. 2019, 11, 542.

- Jones, M.O.; Jones, L.A.; Kimball, J.S.; McDonald, K.C. Satellite passive microwave remote sensing for monitoring global land surface phenology. Remote Sens. Environ. 2011, 115, 1102–1114.

- Rupasinghe, P.A.; Chow-Fraser, P. Identification of most spectrally distinguishable phenological stage of invasive Phramites australis in Lake Erie wetlands (Canada) for accurate mapping using multispectral satellite imagery. Wetl. Ecol. Manag. 2019, 27, 513–538.

- Mahdianpari, M.; Salehi, B.; Mohammadimanesh, F.; Homayouni, S.; Gill, E. The first wetland inventory map of Newfoundland at a spatial resolution of 10 m using Sentinel-1 and Sentinel-2 data on the Google Earth Engine cloud computing platform. Remote Sens. 2019, 11, 43.

- Cai, Y.; Li, X.; Zhang, M.; Lin, H. Mapping wetland using the object-based stacked generalization method based on multi-temporal optical and SAR data. Int. J. Appl. Earth Obs. Geoinf. 2020, 92, 102164.

- Zipper, S.C.; Schatz, J.; Singh, A.; Kucharik, C.J.; Townsend, P.A.; Loheide, S.P. Urban heat island impacts on plant phenology: Intra-urban variability and response to land cover. Environ. Res. Lett. 2016, 11, 054023.

- Zhang, X.; Wang, J.; Gao, F.; Liu, Y.; Schaaf, C.; Friedl, M.; Yu, Y.; Jayavelu, S.; Gray, J.; Liu, L.; et al. Exploration of scaling effects on coarse resolution land surface phenology. Remote Sens. Environ. 2017, 190, 318–330.

- Persson, M.; Lindberg, E.; Reese, H. Tree Species Classification with Multi-Temporal Sentinel-2 Data. Remote Sens. 2018, 10, 1794.

- Mutanga, O.; Shoko, C. Monitoring the spatio-temporal variations of C3/C4 grass species using multispectral satellite data. In Proceedings of the International Geoscience and Remote Sensing Symposium (IGARSS), Valencia, Spain, 22–27 July 2018; pp. 8988–8991.

- Macedo, L.; Kawakubo, F.S. Temporal analysis of vegetation indices related to biophysical parameters using Sentinel 2A images to estimate maize production. In Proceedings of the Remote Sensing for Agriculture, Ecosystems, and Hydrology XIX, Warsaw, Poland, 11–14 September 2017; p. 83.

- Harris, A.; Dash, J. The potential of the MERIS Terrestrial Chlorophyll Index for carbon flux estimation. Remote Sens. Environ. 2010, 114, 1856–1862.

- Pinheiro, H.S.K.; Barbosa, T.P.R.; Antunes, M.A.H.; de Carvalho, D.C.; Nummer, A.R.; de Carvalho, W.; Chagas, C.d.S.; Fernandes-Filho, E.I.; Pereira, M.G. Assessment of phytoecological variability by red-edge spectral indices and soil-landscape relationships. Remote Sens. 2019, 11, 2448.

- Clevers, J.G.P.W.; Gitelson, A.A. Remote estimation of crop and grass chlorophyll and nitrogen content using red-edge bands on Sentinel-2 and -3. Int. J. Appl. Earth Obs. Geoinf. 2013, 23, 344–351.

- Peng, Y.; Nguy-Robertson, A.; Arkebauer, T.; Gitelson, A. Assessment of Canopy Chlorophyll Content Retrieval in Maize and Soybean: Implications of Hysteresis on the Development of Generic Algorithms. Remote Sens. 2017, 9, 226.

- Arroyo-Mora, J.P.; Kalacska, M.; Soffer, R.; Ifimov, G.; Leblanc, G.; Schaaf, E.S.; Lucanus, O. Evaluation of phenospectral dynamics with Sentinel-2A using a bottom-up approach in a northern ombrotrophic peatland. Remote Sens. Environ. 2018, 216, 544–560.

- Zarco-Tejada, P.J.; Hornero, A.; Hernández-Clemente, R.; Beck, P.S.A. Understanding the temporal dimension of the red-edge spectral region for forest decline detection using high-resolution hyperspectral and Sentinel-2A imagery. ISPRS J. Photogramm. Remote Sens. 2018, 137, 134–148.

- Clevers, J.G.P.W.; Kooistra, L.; van den Brande, M.M.M. Using Sentinel-2 data for retrieving LAI and leaf and canopy chlorophyll content of a potato crop. Remote Sens. 2017, 9, 405.

- Villa, P.; Pinardi, M.; Bolpagni, R.; Gillier, J.-M.; Zinke, P.; Nedelcuţ, F.; Bresciani, M. Assessing macrophyte seasonal dynamics using dense time series of medium resolution satellite data. Remote Sens. Environ. 2018, 216, 230–244.

- Gao, F.; Anderson, M.; Daughtry, C.; Karnieli, A.; Hively, D.; Kustas, W. A within-season approach for detecting early growth stages in corn and soybean using high temporal and spatial resolution imagery. Remote Sens. Environ. 2020, 242, 111752.

- Manivasagam, V.S.; Kaplan, G.; Rozenstein, O. Developing transformation functions for VENμS and Sentinel-2 surface reflectance over Israel. Remote Sens. 2019, 11, 1710.

- Li, N.; Zhang, D.; Li, L.; Zhang, Y. Mapping the spatial distribution of tea plantations using high-spatiotemporal-resolution imagery in northern Zhejiang, China. Forests 2019, 10, 856.

- Shang, R.; Liu, R.; Xu, M.; Liu, Y.; Dash, J.; Ge, Q. Determining the start of the growing season from MODIS data in the Indian Monsoon Region: Identifying available data in the rainy season and modeling the varied vegetation growth trajectories. Remote Sens. 2018, 10, 122.

- Baetens, L.; Desjardins, C.; Hagolle, O. Validation of Copernicus Sentinel-2 Cloud Masks Obtained from MAJA, Sen2Cor, and FMask Processors Using Reference Cloud Masks Generated with a Supervised Active Learning Procedure. Remote Sens. 2019, 11, 433.

- Clerc, S.; Devignot, O.; Pessiot, L.; MPC Team S2 MPC. Level 2A Data Quality Report. Available online: https://sentinel.esa.int/documents/247904/685211/Sentinel-2-L2A-Data-Quality-Report (accessed on 25 August 2020).

- Forkel, M.; Migliavacca, M.; Thonicke, K.; Reichstein, M.; Schaphoff, S.; Weber, U.; Carvalhais, N. Codominant water control on global interannual variability and trends in land surface phenology and greenness. Glob. Chang. Biol. 2015, 21, 3414–3435.

- Kandasamy, S.; Baret, F.; Verger, A.; Neveux, P.; Weiss, M. A comparison of methods for smoothing and gap filling time series of remote sensing observations—Application to MODIS LAI products. Biogeosciences 2013, 10, 4055–4071.

- Gao, F.; Morisette, J.T.; Wolfe, R.E.; Ederer, G.; Pedelty, J.; Masuoka, E.; Myneni, R.; Tan, B.; Nightingale, J. An algorithm to produce temporally and spatially continuous MODIS-LAI time series. IEEE Geosci. Remote Sens. Lett. 2008, 5, 60–64.

- Gerber, F.; De Jong, R.; Schaepman, M.E.; Schaepman-Strub, G.; Furrer, R. Predicting Missing Values in Spatio-Temporal Remote Sensing Data. IEEE Trans. Geosci. Remote Sens. 2018, 56, 2841–2853.

- Roerink, G.J.; Menenti, M.; Verhoef, W. Reconstructing cloudfree NDVI composites using Fourier analysis of time series. Int. J. Remote Sens. 2000, 21, 1911–1917.

- Jeganathan, C.; Dash, J.; Atkinson, P.M. Characterising the spatial pattern of phenology for the tropical vegetation of India using multi-temporal MERIS chlorophyll data. Landsc. Ecol. 2010, 25, 1125–1141.

- Wu, M.; Yang, C.; Song, X.; Hoffmann, W.C.; Huang, W.; Niu, Z.; Wang, C.; Li, W.; Yu, B. Monitoring cotton root rot by synthetic Sentinel-2 NDVI time series using improved spatial and temporal data fusion. Sci. Rep. 2018, 8, 2016.

- Solano-Correa, Y.T.; Bovolo, F.; Bruzzone, L.; Fernandez-Prieto, D. A Method for the Analysis of Small Crop Fields in Sentinel-2 Dense Time Series. IEEE Trans. Geosci. Remote Sens. 2020, 58, 2150–2164.

- Hao, P.-y.; Tang, H.-j.; Chen, Z.-x.; Yu, L.; Wu, M.-q. High resolution crop intensity mapping using harmonized Landsat-8 and Sentinel-2 data. J. Integr. Agric. 2019, 18, 2883–2897.

- Miura, T.; Nagai, S. Landslide detection with Himawari-8 geostationary satellite data: A case study of a torrential rain event in Kyushu, Japan. Remote Sens. 2020, 12, 1734.

- Fensholt, R.; Sandholt, I.; Stisen, S.; Tucker, C. Analysing NDVI for the African continent using the geostationary Meteosat Second Generation SEVIRI sensor. Remote Sens. Environ. 2006, 101, 212–229.

- Miura, T.; Nagai, S.; Takeuchi, M.; Ichii, K.; Yoshioka, H. Improved Characterisation of Vegetation and Land Surface Seasonal Dynamics in Central Japan with Himawari-8 Hypertemporal Data. Sci. Rep. 2019, 9, 15692.

- Sobrino, J.A.; Julien, Y.; Soria, G. Phenology Estimation From Meteosat Second Generation Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2013, 6, 1653–1659.

- Yan, D.; Zhang, X.; Nagai, S.; Yu, Y.; Akitsu, T.; Nasahara, K.N.; Ide, R.; Maeda, T. Evaluating land surface phenology from the Advanced Himawari Imager using observations from MODIS and the Phenological Eyes Network. Int. J. Appl. Earth Obs. Geoinf. 2019, 79, 71–83.

- Roy, D.P.; Kovalskyy, V.; Zhang, H.K.; Vermote, E.F.; Yan, L.; Kumar, S.S.; Egorov, A. Characterization of Landsat-7 to Landsat-8 reflective wavelength and normalized difference vegetation index continuity. Remote Sens. Environ. 2016, 185, 57–70.

- Thenkabail, P.S. Global croplands and their importance for water and food security in the twenty-first century: Towards an ever green revolution that combines a second green revolution with a blue revolution. Remote Sens. 2010, 2, 2305–2312.

- Moran, M.S.; Inoue, Y.; Barnes, E.M. Opportunities and limitations for image-based remote sensing in precision crop management. Remote Sens. Environ. 1997, 61, 319–346.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Misra, G.; Cawkwell, F.; Wingler, A. Status of Phenological Research Using Sentinel-2 Data: A Review. Remote Sens. 2020, 12, 2760. https://doi.org/10.3390/rs12172760