1. Introduction

Energy is the material basis for the progress and development of human society. Electricity is a very convenient way to transfer energy, and it has been adapted to a huge and growing number of uses. Global electricity consumption is increasing year by year, and energy shortages are becoming increasingly severe. Managing and optimizing energy usage can effectively reduce energy consumption, and knowing in detail how energy is used helps residents optimize their electric energy management. Some research [1] pointed out that disaggregated information can help householders reduce energy consumption by 15%. Therefore, household electrical energy consumption analysis has gradually become a research field that attracts much attention.

In terms of household energy management, load monitoring is an approach that helps residents save energy. The purpose of load monitoring is to obtain power data for the individual appliances in the entire house. Power companies can use the load monitoring approach to learn the composition of users’ energy consumption and strengthen load-side management. Such energy consumption information can guide users to schedule the usage of high-power non-emergency loads to reduce the peak-to-valley difference and network losses. Currently, researchers divide load monitoring into two categories: intrusive and non-intrusive [2]. Intrusive load monitoring uses sensors installed on each line to collect data on each appliance’s status and power. The result of this scheme is relatively accurate, but it requires installing a large number of sensors in residents’ houses, which makes it costly and hard to deploy. Non-intrusive load monitoring (NILM), also called energy disaggregation, does not need sensors on all lines. The sensors only need to monitor the voltage, current, and other signals at the entrance of the power system, which significantly reduces the hardware cost and installation complexity. NILM can be used for home energy management systems (HEMS) and ambient assisted living (AAL) [3,4,5].

Hart first proposed the concept of NILM and its solutions in the 1990s [6]. Many researchers have proposed various solutions ever since. Approaches to the NILM problem can be divided into low-frequency approaches and high-frequency approaches according to the sampling rate of the input signals. Low-frequency approaches use data (i.e., features) produced at rates lower than the alternating current (AC) base frequency, and high-frequency approaches use data sampled at rates higher than the AC base frequency [7]. Although more information can be extracted from high-frequency data [8], the required data acquisition equipment is more expensive. Therefore, this article studies the low-frequency approaches.

The main stages in NILM are data collection, event detection, feature extraction, and load identification [9]. Ruzzelli et al. [10] proposed a method for NILM using the steady-state voltage information (i.e., peak value, average value, and standard deviation) and its waveform characteristics. Hassan et al. [11] used voltage-current traces for NILM and pointed out that combining voltage and current traces distinguishes different appliances more readily than using voltage waveforms or current waveforms individually. Figueiredo et al. [12] proposed a non-negative tensor disaggregation method to extract features and used the extracted features to perform energy disaggregation. Batra et al. [13] regarded NILM as a combinatorial optimization problem and proposed an algorithm to solve this problem. Inspired by speech recognition, some researchers used hidden Markov models and their variants to solve this problem [14,15]. Some researchers [16,17] have also proposed event-based disaggregation methods. They first extracted the rising/falling edge characteristics and then classified these features to infer the appliances’ power, using classification methods that include support vector machines (SVM), fuzzy logic (FL), naive Bayes (NB), K-means, and decision trees (DT).

In recent years, with the continuous breakthroughs in computer vision, speech recognition, natural language processing, and other fields using deep learning, many scholars have also begun to use deep learning methods to solve the NILM problem. In 2015, Kelly et al. [18] used long short-term memory (LSTM), denoising autoencoder (DAE), and other deep neural networks to solve the NILM problem with low-frequency signals. Nascimento [19] used a convolutional neural network (CNN) and a recurrent neural network (RNN) for energy disaggregation. Kim et al. [20] applied the gated recurrent unit (GRU) to NILM. Zhang et al. [21] proposed a sequence-to-point (Seq2point) method, which uses a sliding window over the mains data to generate the model’s input. The model’s output is the estimated power of the target appliance at the midpoint of the input window. Pan et al. [22] applied the generative adversarial network (GAN) to NILM and proposed a sequence-to-subsequence (Seq2subseq) method based on conditional GAN (cGAN). Puente et al. [23] proposed an unsupervised disaggregation method, which can identify the behavior of each appliance from mains power data through soft computing technology. Their method establishes a box model composed of a sequence of rectangles of different heights (powers) and widths (times) to detect power changes. Piccialli et al. [24] combined the power and status information of the appliance and used a deep neural network that combines a regression sub-network with a classification sub-network to solve the NILM problem. The regression sub-network infers the appliance’s power, and the classification sub-network infers the state of the appliance. This work also added an attention mechanism to the deep neural network to improve its generalization ability. Lee et al. [25] proposed an auto-encoder compression model based on the frequency selection method for NILM in the Internet of Things (IoT) environment, which can improve the reconstruction quality while maintaining the compression ratio (CR).

All of the above methods regard the models used to infer different appliances as independent models, and there is no correlation between the models. Jia et al. [26] proposed a tree structure model (treeCNN) for NILM, which considers the relation between the models for different target appliances. TreeCNN places different models as sub-models in the nodes of a tree structure to generate an overall model, which maintains the relative independence of each sub-model while establishing a connection between them. Although this method is called a tree structure method, the sub-models in treeCNN are all in a chain. Therefore, in this article, we call this disaggregation method the single-chain disaggregation method for NILM (SC-NILM). In [26], a brute-force method and a greedy method are proposed to obtain the single chain. For the convenience of description, in this article, we will call them Opt-SC and Gre-SC, respectively. Batra et al. [27] developed a non-intrusive load monitoring toolkit (NILMTK) to help researchers use existing public datasets and quickly implement their ideas. At present, the toolkit integrates a total of 12 NILM algorithms.

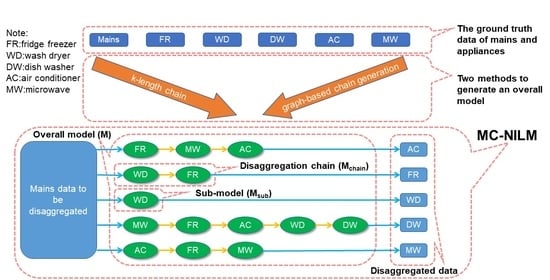

When performing energy disaggregation, most researchers input mains data into a specific model, and the model outputs the inferred power of the target appliance. In other words, inferring the power of multiple target appliances requires many independent models. In this article, we use the existing models for individual target appliances as sub-models. Multiple sub-models are linked into multiple chains, and the output of the end node of each chain is the inferred power of a different appliance. The chains constitute an overall model, which we call the MC-NILM. Because using the brute-force method to obtain the best-performing MC-NILM has a high time cost, this article proposes two solutions to reduce complexity. The first approach is to reduce the total number of candidate MC-NILM models by limiting the maximum length of chains, which shrinks the search space of the brute-force method. The second approach is to evaluate the relative position of each pair of sub-models in a chain for a target appliance and use this information to guide the search for the chain structure with a graph-based algorithm (GBA). We tested MC-NILM on two public datasets, Dataport and UK-DALE, and used different metrics to evaluate performance. Experimental results show that our MC-NILM is better than several baselines, including Opt-SC and Gre-SC, and that the performance gap between our chain searching methods and the exhaustive search is small.

The main contributions of this paper are as follows:

- (1) We proposed a multi-chain energy disaggregation method that considers the relationship between appliances for energy disaggregation and constructs a separate energy disaggregation chain for each appliance;

- (2) We proposed two methods to reduce the complexity of the search for MC-NILM structure;

- (3) Our experimental results demonstrated that the MC-NILM method is a general framework to leverage the existing NILM algorithms as sub-models and improve the overall performance of the original algorithms.

This article is organized as follows. Section 2 describes the NILM problem. Section 3 presents our MC-NILM framework and two methods to reduce the complexity of obtaining MC-NILM. Section 4 describes the process of the experiment and the results obtained. Finally, Section 5 concludes this article.

3. MC-NILM

3.1. Overview

This article proposes the MC-NILM based on the following idea. When inferring the power of the target appliance $a_i$, the data of other appliances in the mains acts as interference. If the data of other appliances are removed from the input data as much as possible, the power of $a_i$ can be inferred from the mains more easily. This article uses an iterative method with a chain structure to gradually remove the power of non-target appliances from the mains. Every node in the chain except the end node infers the power of an appliance other than $a_i$. We subtract the inferred power from the input data of the node to obtain the input data of the subsequent node. The output of the end node is the inferred power of the target appliance $a_i$. This method simplifies the input data of the end node and improves its ability to infer the power of $a_i$. The order of the other appliances in the chain affects the inferred output for $a_i$. Since each appliance has its unique characteristics and energy consumption pattern, the optimal chains for different target appliances are also different. Therefore, we need to use multiple chains to infer the power of multiple target appliances, which is called MC-NILM. At present, the method most similar to our idea is treeCNN [26]. However, treeCNN uses a single chain for all target appliances (SC-NILM). As will be shown later, our proposed MC-NILM method can achieve better performance.

Figure 1 shows a schematic diagram of the three disaggregation schemes: (a) the independent method adopted by most researchers, (b) the SC-NILM proposed in [26], and (c) the MC-NILM proposed in this article. Figure 1 uses the five appliances studied in [26] to illustrate the three schemes.

In MC-NILM, each appliance has its own disaggregation chain. The set of multiple chains is denoted by $C = \{C_1, \ldots, C_N\}$, where $C_i$ denotes the disaggregation chain for appliance $a_i$. $C_i$ is an ordered list of sub-models. Furthermore, we use $M_i^{Z \setminus A'}$ to represent a sub-model used to infer the power of $a_i$ from the power of the corresponding components in $Z \setminus A'$, where $Z$ is the collection of all mains components, including target appliances, unconcerned appliances, and noise; $A'$ is a subset of $A$; $Z \setminus A'$ represents the set $Z$ with the power of the appliances in $A'$ subtracted; and $i$ is the index of the appliance. In particular, when $A'$ is an empty set, $Z \setminus A'$ is abbreviated as $Z$, and $Z \setminus \{a_j\}$ represents the set formed by taking the appliance $a_j$ out of the set $Z$. In addition, this article uses $P(\cdot)$ to represent the performance of a sub-model or a chain.

The overall process of MC-NILM to infer the power of the target appliances is as follows:

- (1) We divide the whole dataset into three parts: a sub-model training dataset, a multi-chain structure search dataset, and a performance testing dataset;

- (2) We use the sub-model training dataset to train multiple sub-models, which can be used to form disaggregation chains;

- (3) We use the multi-chain structure search dataset to search for the optimal multi-chain model for the target appliances;

- (4) We evaluate the performance of the selected multi-chain model on the performance testing dataset.
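The three-way split in the steps above can be sketched as follows. This is only a minimal illustration that assumes time-ordered samples split into contiguous blocks; the function name `split_dataset` and the 60/20/20 percentages are hypothetical and are not taken from this article.

```python
def split_dataset(samples, train_pct=60, search_pct=20):
    """Split time-ordered samples into three contiguous parts.

    Returns (sub-model training part, structure-search part, testing part).
    The percentages are illustrative assumptions, not values from the article.
    """
    if train_pct <= 0 or search_pct <= 0 or train_pct + search_pct >= 100:
        raise ValueError("percentages must be positive and leave room for a test part")
    n = len(samples)
    train_end = n * train_pct // 100
    search_end = train_end + n * search_pct // 100
    return samples[:train_end], samples[train_end:search_end], samples[search_end:]


readings = list(range(10))  # stand-in for time-ordered mains readings
d_train, d_search, d_test = split_dataset(readings)
```

Keeping the three parts disjoint means that the chain structure is selected on data the sub-models were not trained on, and the final evaluation uses data that influenced neither step.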

Next, we will introduce the details of the MC-NILM.

3.2. Sub-Model Training

The MC-NILM proposed in this article consists of sub-models that can be created by any existing NILM method. As mentioned in the previous subsection, the first step in creating an MC-NILM is to train sub-models. For ease of description, we use the red node of an air conditioner (AC) in Figure 1c to illustrate the training process of the sub-model. In the training stage of the sub-model, the feature is the ground truth power data obtained by subtracting the fridge freezer (FR) and microwave (MW) power data from the mains data, and the label is the ground truth power data of the AC. In the sub-model’s inference stage, the sub-model’s input is the data obtained by gradually subtracting the output values of the nodes before the sub-model from the mains. In this example, the input is the mains data minus the inferred power of the FR and the MW, and the output is the inferred power of the AC. This approach can reduce the complexity of the input data of the sub-model and reduce the difficulty of the sub-model training process.
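The construction of a training pair for one sub-model can be sketched as follows. This is a minimal illustration rather than the authors' code: the function name `make_training_pair`, the dictionary layout, and the sample wattages are hypothetical, and power series are represented as plain Python lists of equal length.

```python
def make_training_pair(mains, ground_truth, target, removed):
    """Build (features, labels) for the sub-model of `target`.

    features: mains minus the ground-truth power of every appliance in
              `removed` (the predecessors of the node in its chain).
    labels:   ground-truth power of `target`.
    """
    features = list(mains)
    for name in removed:
        series = ground_truth[name]
        features = [f - p for f, p in zip(features, series)]
    labels = list(ground_truth[target])
    return features, labels


# Hypothetical ground-truth power readings in watts.
truth = {
    "FR": [100, 100, 0, 0],
    "MW": [0, 800, 800, 0],
    "AC": [0, 0, 1500, 1500],
}
mains = [a + b + c for a, b, c in zip(truth["FR"], truth["MW"], truth["AC"])]

features, labels = make_training_pair(mains, truth, target="AC", removed=["FR", "MW"])
```

In this toy example the mains contains only the three appliances, so the features coincide with the AC power. With real data, unconcerned appliances and noise would remain in the features.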

3.3. Energy Disaggregation in a Chain

Figure 1c illustrates an example of the MC-NILM. When inferring the power of the target appliances, the MC-NILM uses different chains to infer the power of different target appliances. The input for all chains is the mains power. Along a particular chain, the powers of several appliances other than the target one are inferred with sub-models and subtracted from the mains power iteratively. The output at the end of the chain is then the inferred power of the target appliance. In this way, due to its multi-chain structure, the MC-NILM can obtain the inferred power of each target appliance from the mains. Algorithm 1 describes the details of the MC-NILM.

| Algorithm 1: Process of inferring power of appliance by MC-NILM |

![Energies 14 04331 i001]() |
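The inference along a chain described in this subsection can be sketched as follows. This is a minimal illustration under stated assumptions and not a transcription of Algorithm 1: each sub-model is modelled as a hypothetical callable that maps an input power series to an inferred power series, and the helper names `infer_chain`, `infer_multi_chain`, and `make_toy_model`, as well as the threshold-based toy models, are invented for the example.

```python
def infer_chain(mains, chain):
    """Run one disaggregation chain.

    `chain` is an ordered list of sub-models (callables). Every node except
    the last infers a non-target appliance, whose inferred power is
    subtracted from the running input. The output of the last node is
    returned as the inferred power of the target appliance.
    """
    if not chain:
        raise ValueError("a chain needs at least one sub-model")
    current = list(mains)
    for sub_model in chain[:-1]:
        inferred = sub_model(current)
        current = [c - p for c, p in zip(current, inferred)]
    return chain[-1](current)


def infer_multi_chain(mains, chains):
    """Run one chain per target appliance; every chain starts from the mains."""
    return {target: infer_chain(mains, chain) for target, chain in chains.items()}


def make_toy_model(threshold, power):
    """Toy stand-in for a trained sub-model: constant power above a threshold."""
    return lambda series: [power if x >= threshold else 0 for x in series]


mw_from_mains = make_toy_model(threshold=800, power=800)
fr_from_mains = make_toy_model(threshold=100, power=100)
fr_after_mw = make_toy_model(threshold=100, power=100)

chains = {
    "MW": [mw_from_mains],               # chain of length 1
    "FR": [mw_from_mains, fr_after_mw],  # remove MW first, then infer FR
}
mains = [100, 900, 800, 0]
result = infer_multi_chain(mains, chains)
independent_fr = fr_from_mains(mains)    # FR inferred directly from the mains
```

With these toy models, the independent FR model mistakes the microwave-only sample for a running fridge, while the chain that removes the MW first does not. This mirrors the motivation for chains, although real sub-models are learned rather than thresholded.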

3.4. Complexity Analysis

Here we analyze the complexity of the brute-force method to search for the optimal MC-NILM model. Let us calculate the number of models generated by the MC-NILM method and the number of sub-models that need to be trained. We consider the case where the number of target appliances whose power needs to be inferred is $N$. For the chain of a particular appliance $a_i$, during the training process, the target output of the sub-model in the end node of this chain remains unchanged, but its input has many possibilities, and the length of the chain varies from 1 to $N$. When the chain length is $k$, this method needs to select $k-1$ appliances from the other $N-1$ appliances as the predecessors of the end node, which gives $\binom{N-1}{k-1}$ possible sets of predecessors. Because $\sum_{k=1}^{N} \binom{N-1}{k-1} = 2^{N-1}$, there are a total of $2^{N-1}$ possible predecessor sets, and hence $2^{N-1}$ possible end-node sub-models, for $a_i$. When inferring the power of $N$ appliances, we need to evaluate the performance of all resulting models and select the MC-NILM model with the best performance. For all of these models, we need to train $N \cdot 2^{N-1}$ sub-models.

For example, the construction of all the MC-NILM models for 5 types of target appliances requires training 80 sub-models. The greater the number of sub-models, the more time and space are required to create the sub-models. The MC-NILM can use a variety of NILM methods to create sub-models. When using deep learning methods, model training is exceptionally time-consuming. Therefore, we need to reduce the complexity of MC-NILM.
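The figure of 80 sub-models for 5 appliances can be checked by enumeration. The sketch below assumes that a sub-model is identified by its target appliance together with the set of other appliances removed from its input, which matches the training procedure in Section 3.2; the function names and placeholder appliance names are hypothetical. It also counts the chains of one appliance under the additional assumption that two chains with the same predecessors in a different order are distinct.

```python
from itertools import combinations, permutations


def enumerate_sub_models(appliances):
    """List every (target, removed_set) pair; one sub-model per pair."""
    sub_models = []
    for target in appliances:
        others = [a for a in appliances if a != target]
        for size in range(len(others) + 1):
            for removed in combinations(others, size):
                sub_models.append((target, frozenset(removed)))
    return sub_models


def count_ordered_chains(appliances, target):
    """Count chains for `target` when the order of predecessors matters."""
    others = [a for a in appliances if a != target]
    return sum(
        1
        for size in range(len(others) + 1)
        for _ in permutations(others, size)
    )


appliances = ["a1", "a2", "a3", "a4", "a5"]  # placeholder names
n_sub_models = len(enumerate_sub_models(appliances))
n_chains_for_a1 = count_ordered_chains(appliances, "a1")
```

The number of sub-models grows as $N \cdot 2^{N-1}$, while the number of ordered chains grows factorially, which is why both training and exhaustive search become expensive as $N$ increases.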

3.5. Complexity Reduction

From the previous analysis, obtaining an MC-NILM with the best performance through the brute-force method requires creating a large number of sub-models, and the complexity and time cost are too high. Therefore, we propose two solutions to reduce the complexity of MC-NILM.

3.5.1. K-Length Chain

We can reduce the total number of models by limiting the maximum length of the chains. When we limit the maximum length of the chains to $k$, each sub-model has at most $k-1$ appliances removed from its input, so the MC-NILM method requires only $N \sum_{j=0}^{k-1} \binom{N-1}{j}$ different sub-models, and the number of candidate models shrinks accordingly. Reducing $k$ reduces both the number of candidate MC-NILM models and the number of sub-models required. Meanwhile, limiting $k$ may affect the performance of the MC-NILM. In the extreme case, when $k = 1$, the MC-NILM degenerates into the existing independent models, with low complexity and poor performance. Therefore, there is a trade-off between complexity and performance.
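The effect of the length limit on the number of sub-models can be tabulated with a short calculation. This sketch assumes, as in the complexity analysis, that a sub-model is determined by its target appliance and the set of removed appliances; the function name `sub_models_with_max_length` is hypothetical.

```python
from math import comb


def sub_models_with_max_length(n_appliances, max_length):
    """Number of sub-models when chains have at most `max_length` nodes.

    A node at position L in a chain has L - 1 predecessors, so each target
    needs one sub-model per set of at most max_length - 1 removed appliances
    drawn from the other n_appliances - 1.
    """
    if not 1 <= max_length <= n_appliances:
        raise ValueError("max_length must be between 1 and n_appliances")
    per_target = sum(comb(n_appliances - 1, j) for j in range(max_length))
    return n_appliances * per_target


counts = {k: sub_models_with_max_length(5, k) for k in range(1, 6)}
```

For 5 appliances the count rises from 5 sub-models at a maximum length of 1 (the independent models) to 80 at a maximum length of 5 (the unrestricted case).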

3.5.2. Graph-Based Chain Generation

In addition, we propose a graph-based chain generation algorithm (GBA) for obtaining MC-NILM. This solution generates an MC-NILM based on the pairwise relationships between appliances. First, we train the independent sub-model, whose input is the mains, for each of the $N$ target appliances and obtain its performance. The sub-models use the same metrics as the MC-NILM, so the performance of the MC-NILM is optimized through the performance data of the sub-models. Subsequently, for each target appliance $a_i$, we take each remaining appliance $a_j$ as its predecessor and train the sub-model that infers $a_i$ from the mains with $a_j$ removed. We then combine the independent sub-model of $a_j$ and this sub-model into a two-node chain and obtain the performance of the chain. If the chain performs better than the independent sub-model of $a_i$, it means that when inferring the power of $a_i$, it is better to extract $a_j$ before $a_i$ than to extract $a_i$ directly. To obtain the MC-NILM, we convert the information about the relationship of these paired appliances into a graph structure.

We regard each appliance as a vertex of the graph. The weight $w_{ji}$ of the directed edge from vertex $j$ (appliance $a_j$) to vertex $i$ (appliance $a_i$) is the degree of optimization of the two-node chain that extracts $a_j$ before $a_i$ compared to the independent sub-model of $a_i$. A negative value of $w_{ji}$ means that the performance of the chain is better than that of the independent sub-model. The specific calculation method is related to the selection of metrics. Although there are many different chains in MC-NILM, the input of all chains is the same data. To obtain the MC-NILM, we add a special vertex, which stands for this common input, to the graph, whose outgoing edges with zero weight point to all other vertices. The structure of the MC-NILM is obtained by finding the paths from the special vertex to all other vertices, as shown in Algorithm 2. The paths have two properties:

- (1) Because an appliance cannot exist more than once in a chain, these paths should be simple paths, that is, paths without cycles.

- (2) These paths should be the shortest paths from the special vertex to the vertices of the target appliances, to ensure that the performance obtained by inferring the power of the target appliance through the paths is the best.

It can be seen from Algorithm 2 that, when using GBA to obtain MC-NILM, we first need to train the sub-models used for graph construction for the $N$ appliances (lines 7 to 9), that is, the $N$ independent sub-models and the $N(N-1)$ pairwise sub-models. Then, after the shortest paths (chains) for the appliances are determined, we need to train the remaining missing sub-models within these paths (lines 15 to 22); the number of sub-models trained at this stage depends on the chains found and can be as low as 0. Compared with the exhaustive method, this method can significantly reduce the number of sub-models that need to be trained.

| Algorithm 2: Graph-based algorithm (GBA) for chain generation. |

![Energies 14 04331 i002]() |
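The graph construction and path search described in this subsection can be sketched as follows. This is a hedged illustration and not a transcription of Algorithm 2: it assumes an error metric where lower is better, so an edge weight is the pairwise-chain error minus the independent error; the error values are invented; and the shortest simple paths are found by exhaustive search, a technique substituted here because it tolerates negative weights and negative cycles and is only practical for a small number of appliances. The names `SOURCE`, `build_graph`, and `shortest_simple_path` are hypothetical.

```python
SOURCE = "mains"  # hypothetical label for the special vertex


def build_graph(independent_error, pair_error):
    """Return {u: {v: weight}} including the special vertex.

    independent_error[i]: error of inferring i directly from the mains.
    pair_error[(j, i)]:   error of inferring i after extracting j.
    A negative weight on j -> i means extracting j first helps i.
    """
    graph = {SOURCE: {i: 0 for i in independent_error}}
    for i in independent_error:
        graph[i] = {}
    for (j, i), err in pair_error.items():
        graph[j][i] = err - independent_error[i]
    return graph


def shortest_simple_path(graph, target):
    """Exhaustively search simple paths from SOURCE to `target`."""
    best_cost, best_path = float("inf"), None
    stack = [(SOURCE, 0, [SOURCE])]
    while stack:
        node, cost, path = stack.pop()
        if node == target:
            if cost < best_cost:
                best_cost, best_path = cost, path
            continue
        for nxt, weight in graph[node].items():
            if nxt not in path:  # an appliance may appear only once
                stack.append((nxt, cost + weight, path + [nxt]))
    return best_cost, best_path[1:]  # drop the special vertex


# Invented error values (for example, mean absolute error in watts).
independent_error = {"FR": 30, "MW": 20, "AC": 50}
pair_error = {
    ("MW", "FR"): 22, ("AC", "FR"): 35,
    ("FR", "MW"): 21, ("AC", "MW"): 19,
    ("FR", "AC"): 45, ("MW", "AC"): 40,
}
graph = build_graph(independent_error, pair_error)
chains = {t: shortest_simple_path(graph, t)[1] for t in independent_error}
```

Each resulting list is the order of appliances in the chain for one target, ending with the target itself. The summed pairwise weights only estimate how a longer chain will perform, so the sub-models along the selected chains still need to be trained and evaluated.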